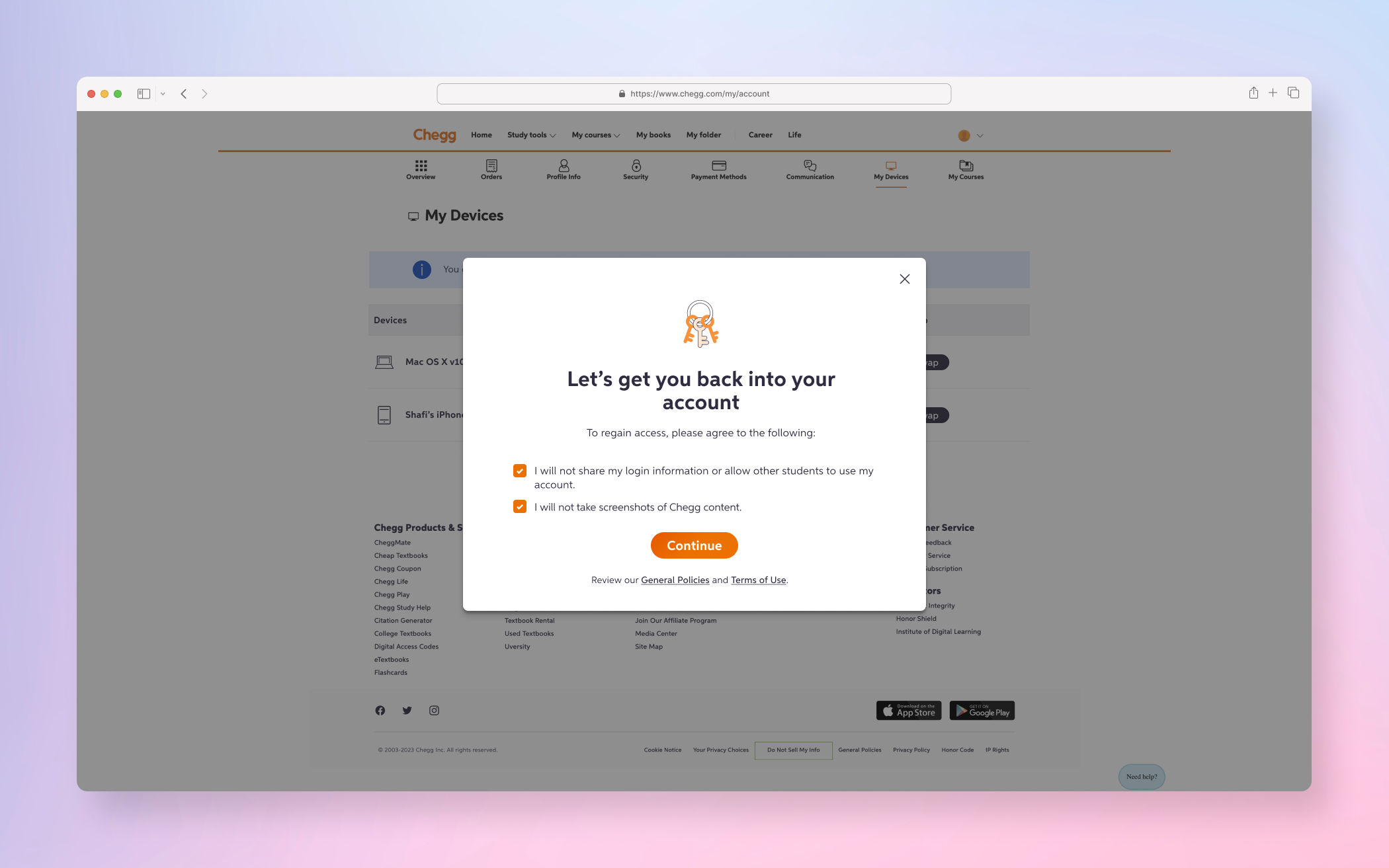

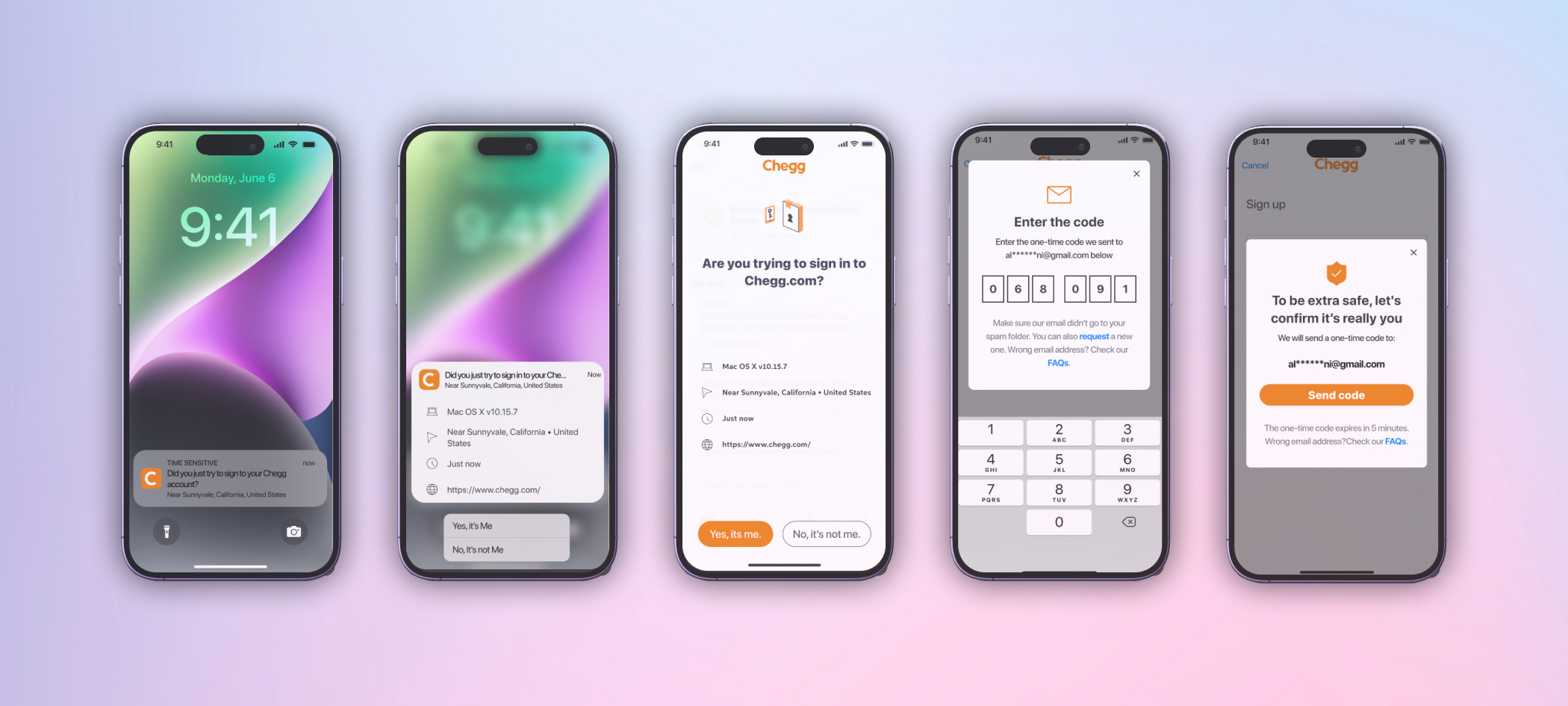

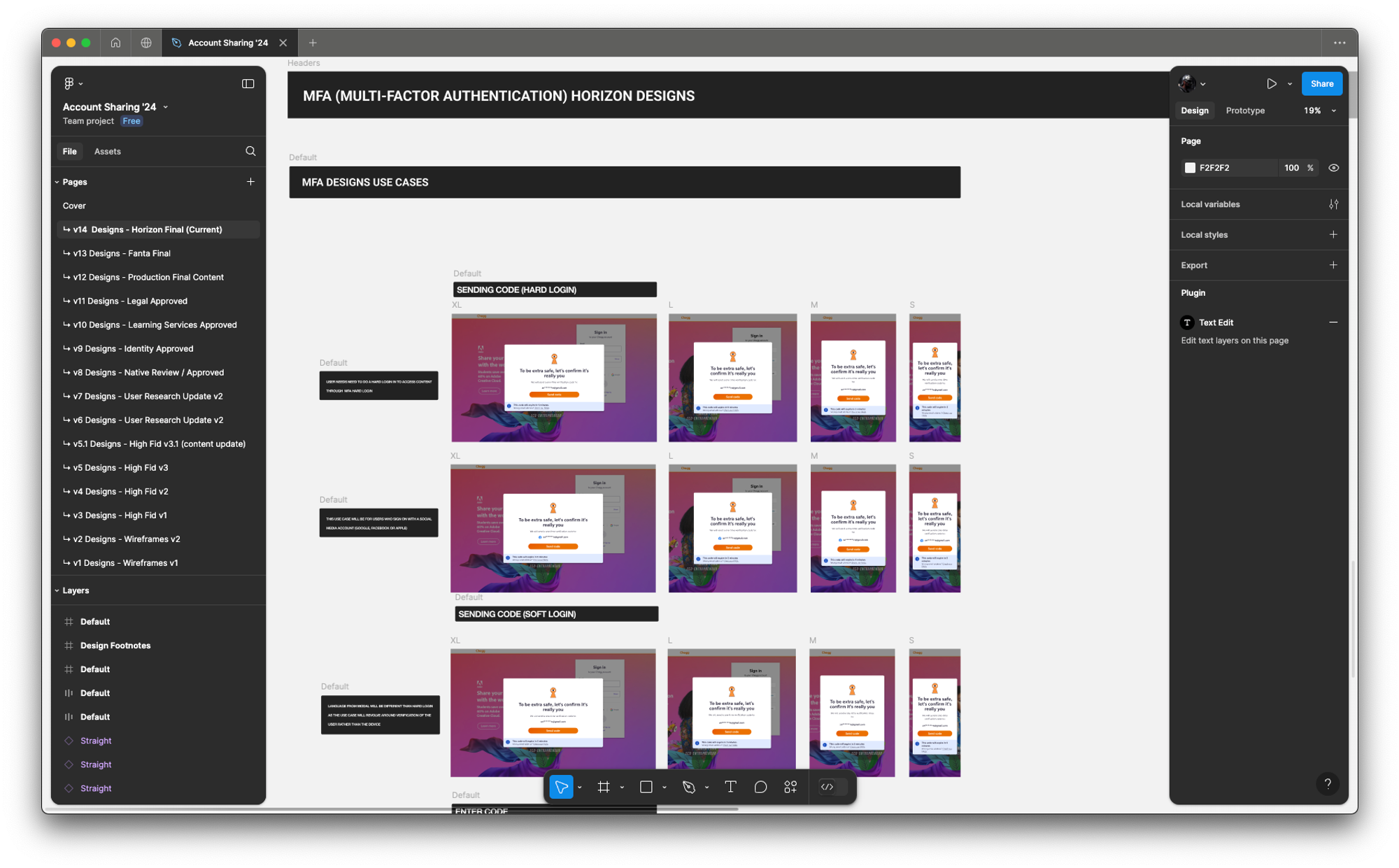

FINAL PRODUCT

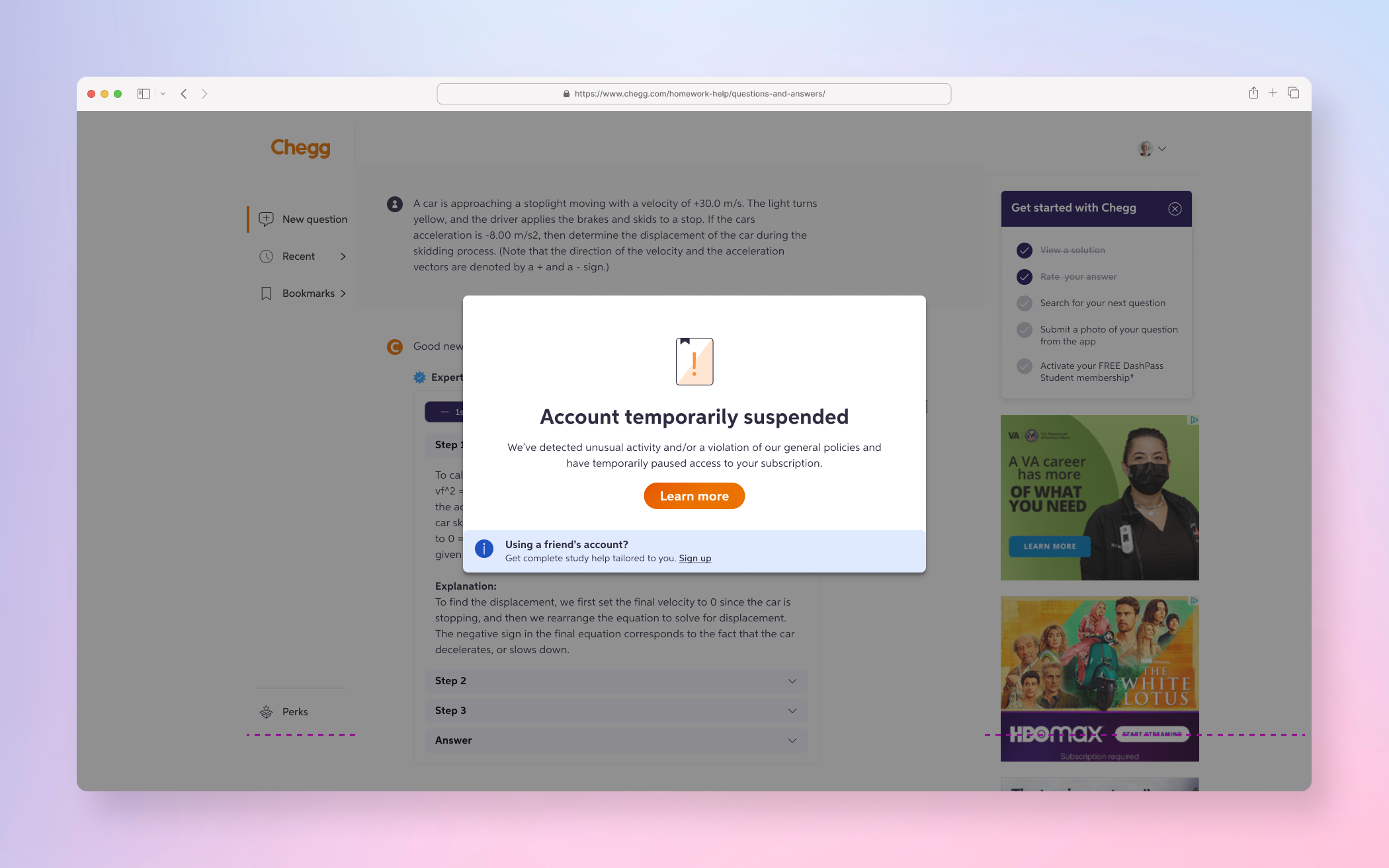

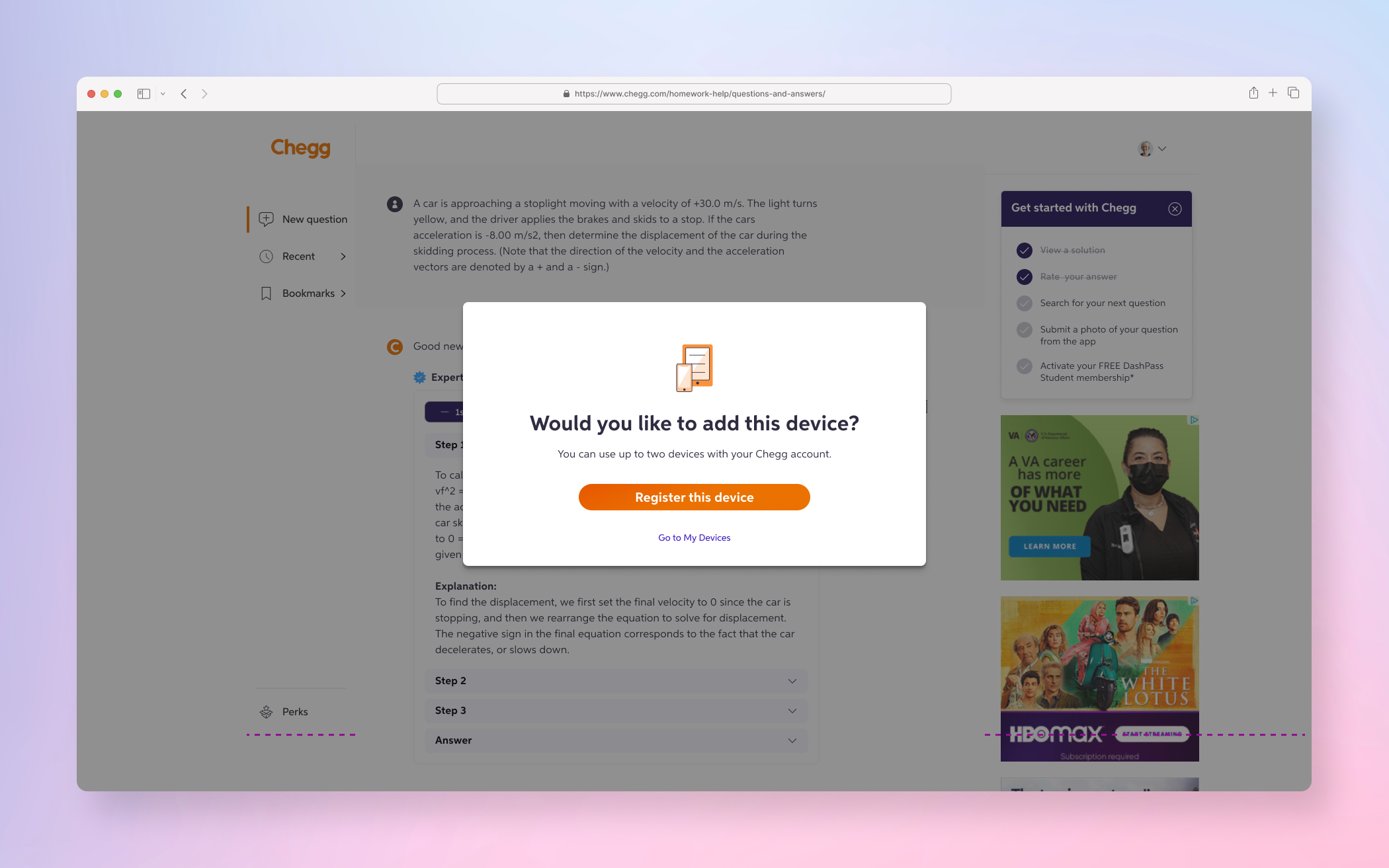

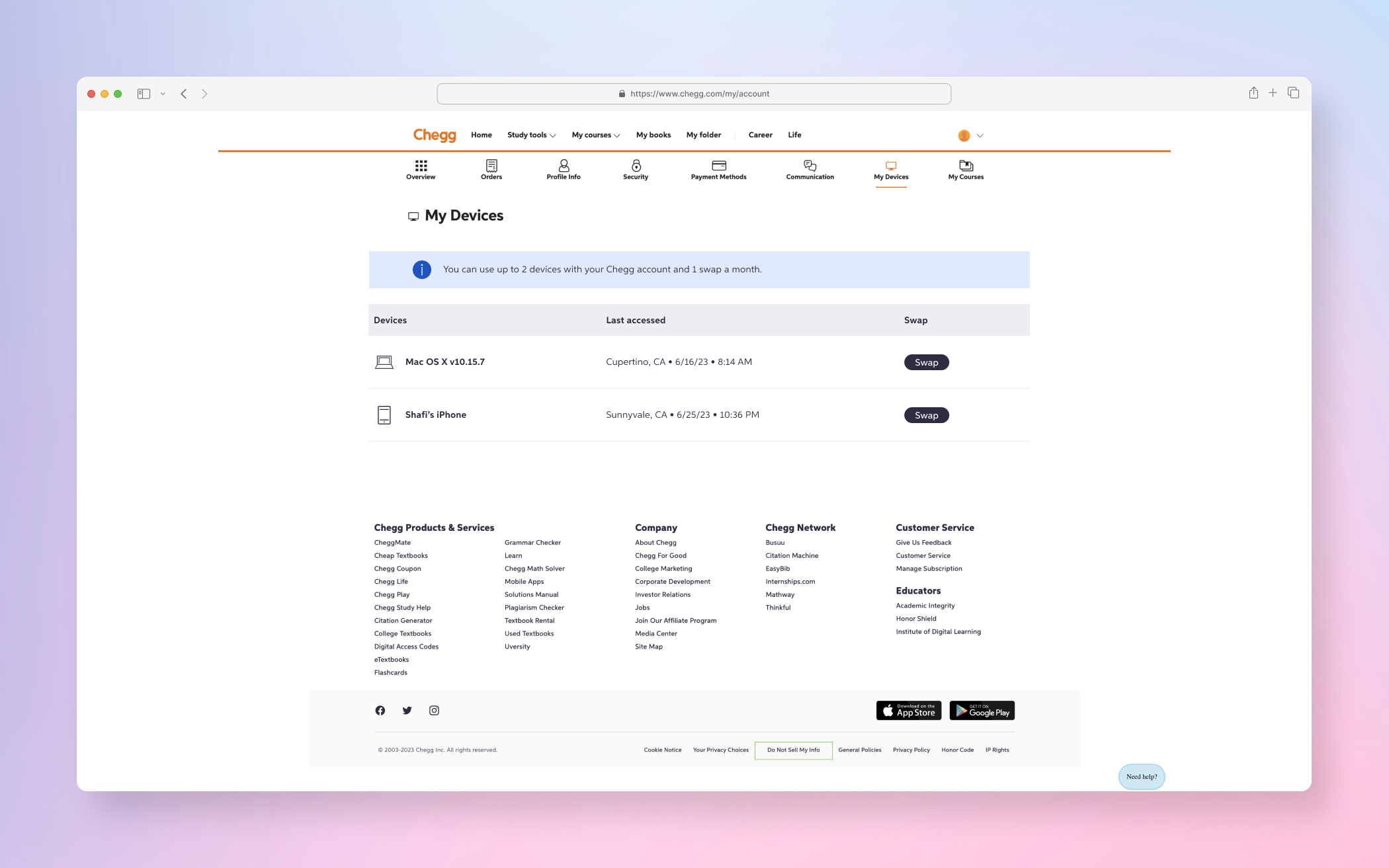

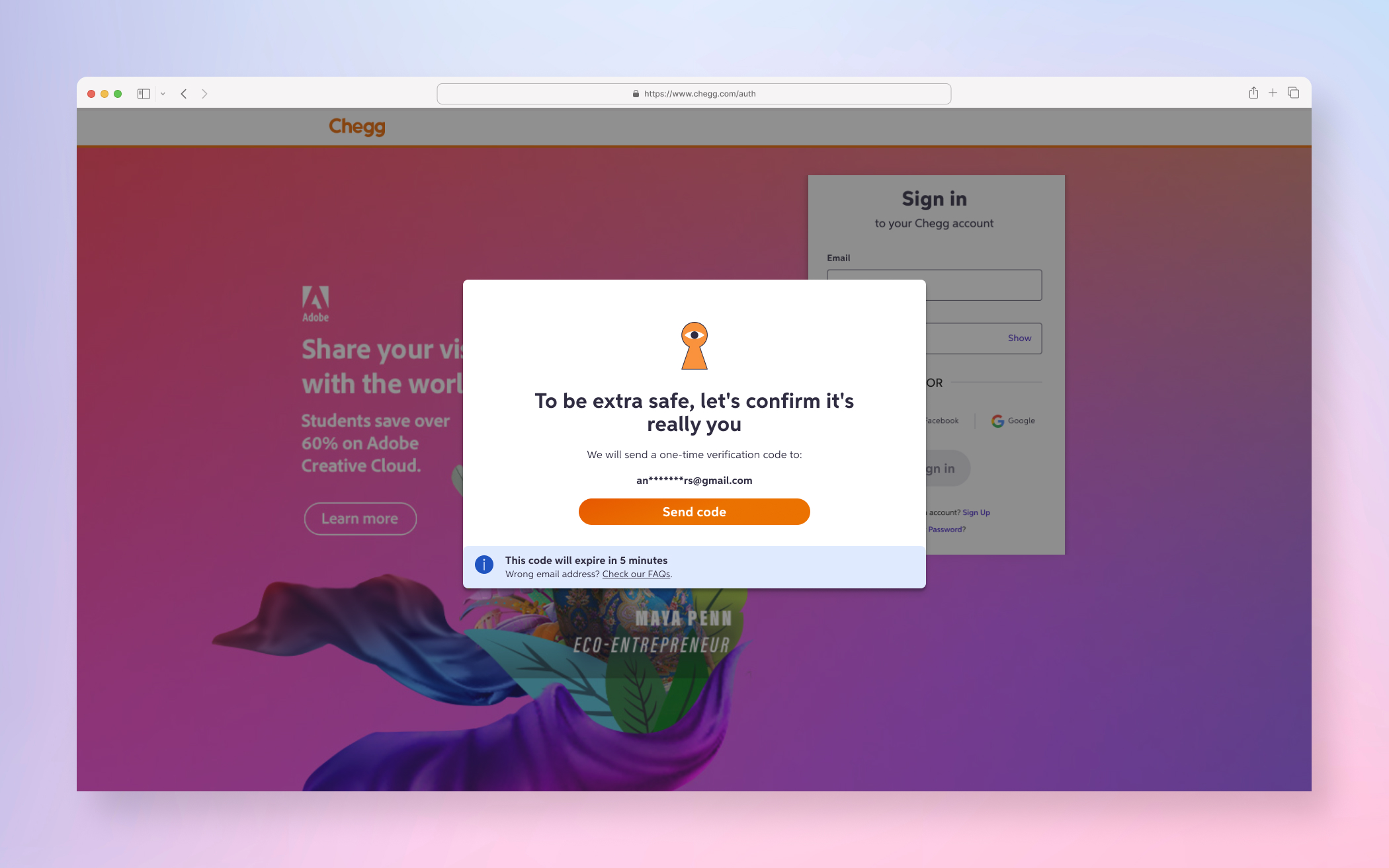

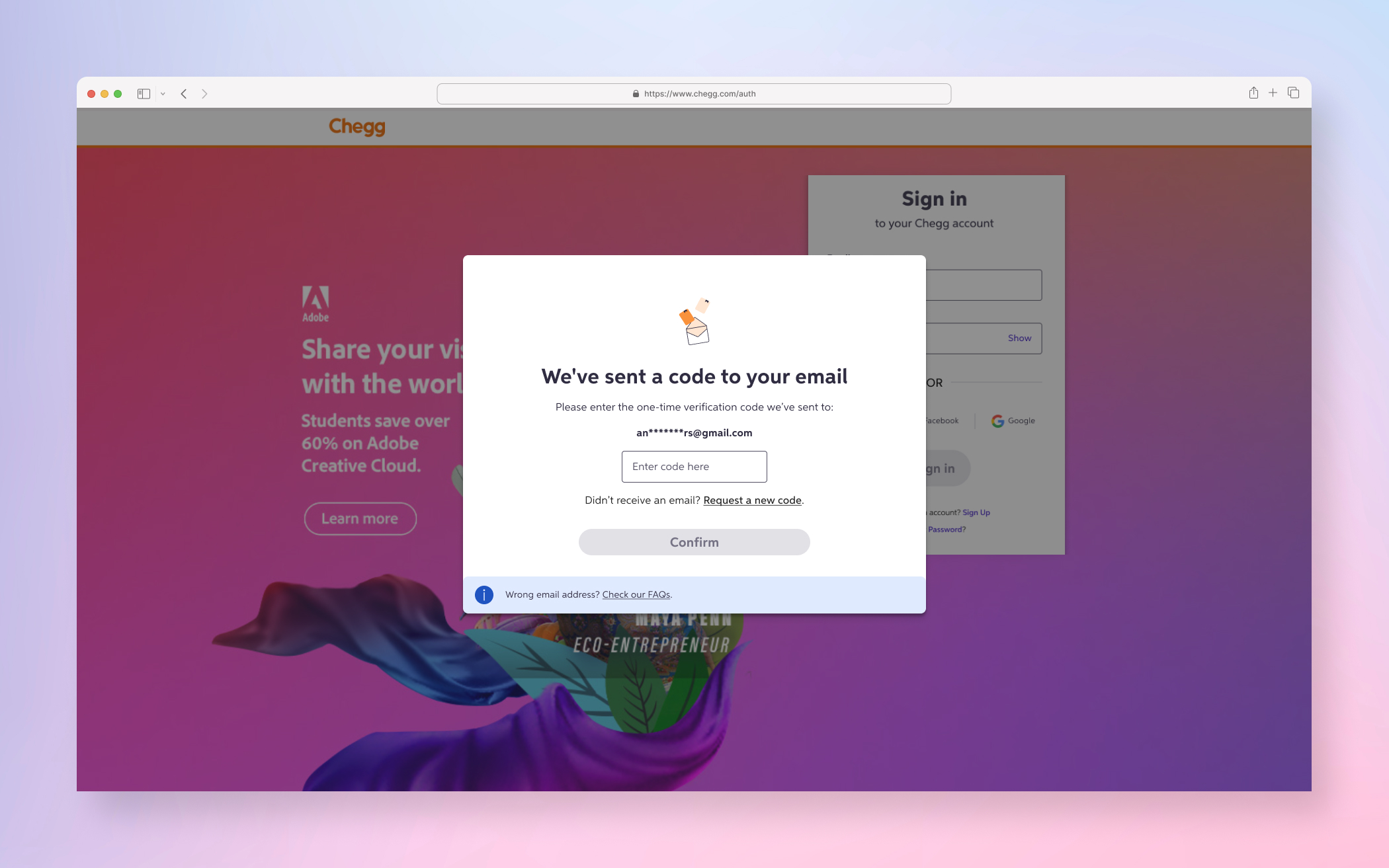

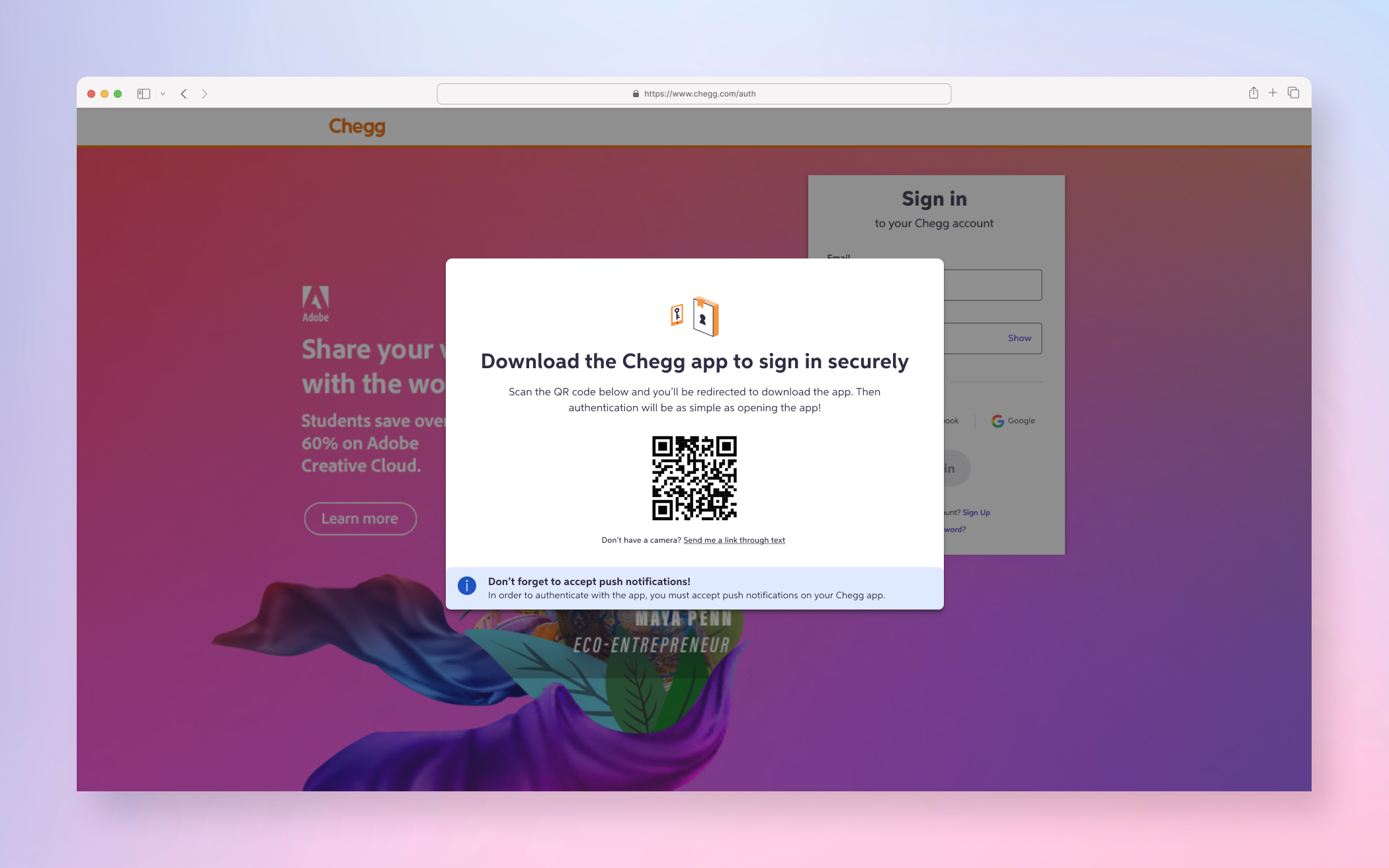

A few example screens from the Service Abuse initiative, covering Detention, Device Management, and MFA.

DISCOVERY PHASE

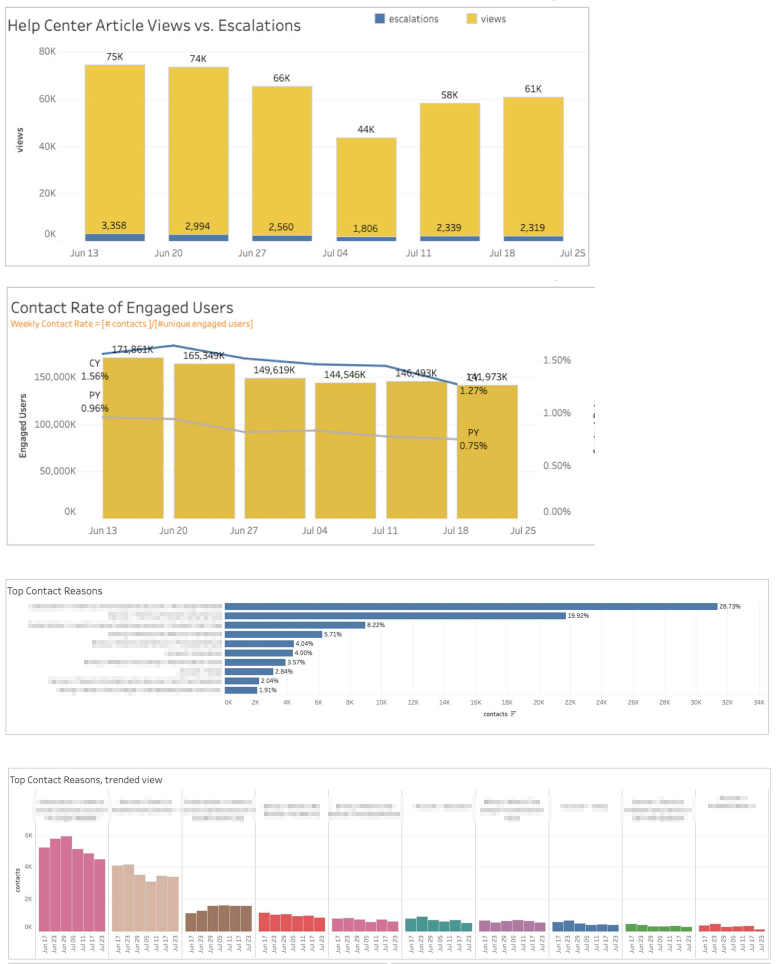

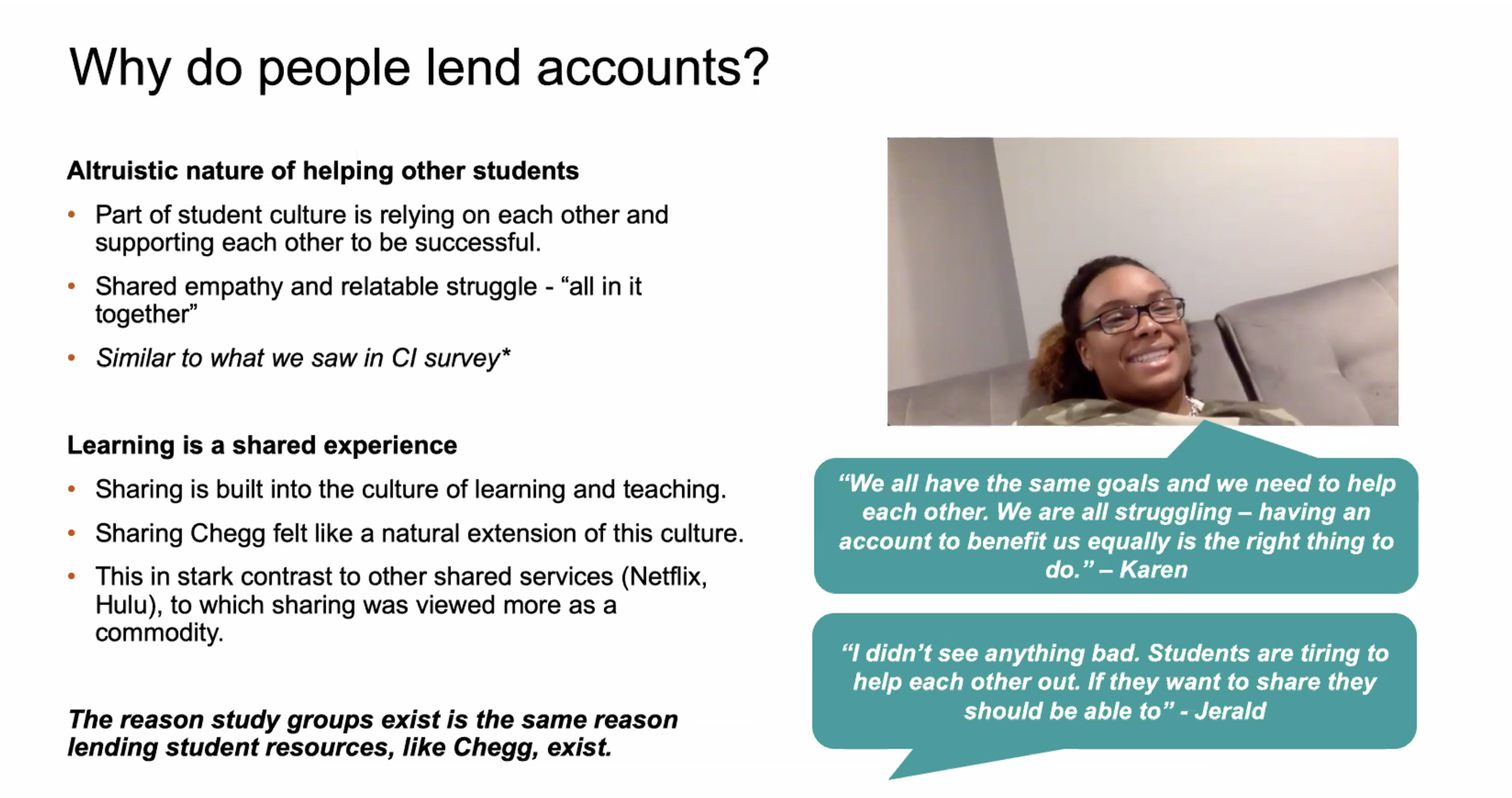

The Discovery Phase began with understanding and gathering: a deep dive into the quantitative data and direct customer service feedback from users. It was important to validate our hypotheses and to understand user sentiment.

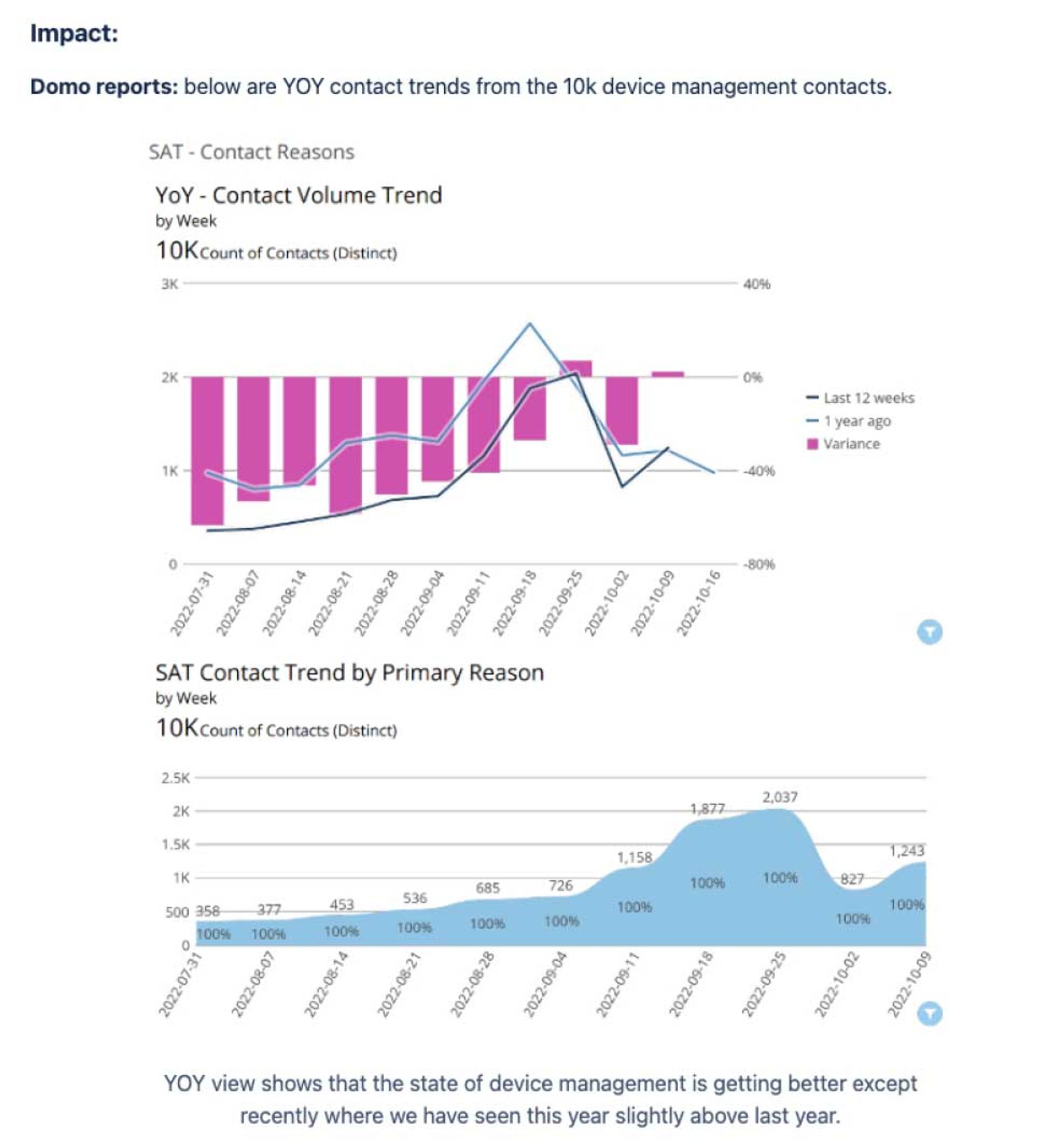

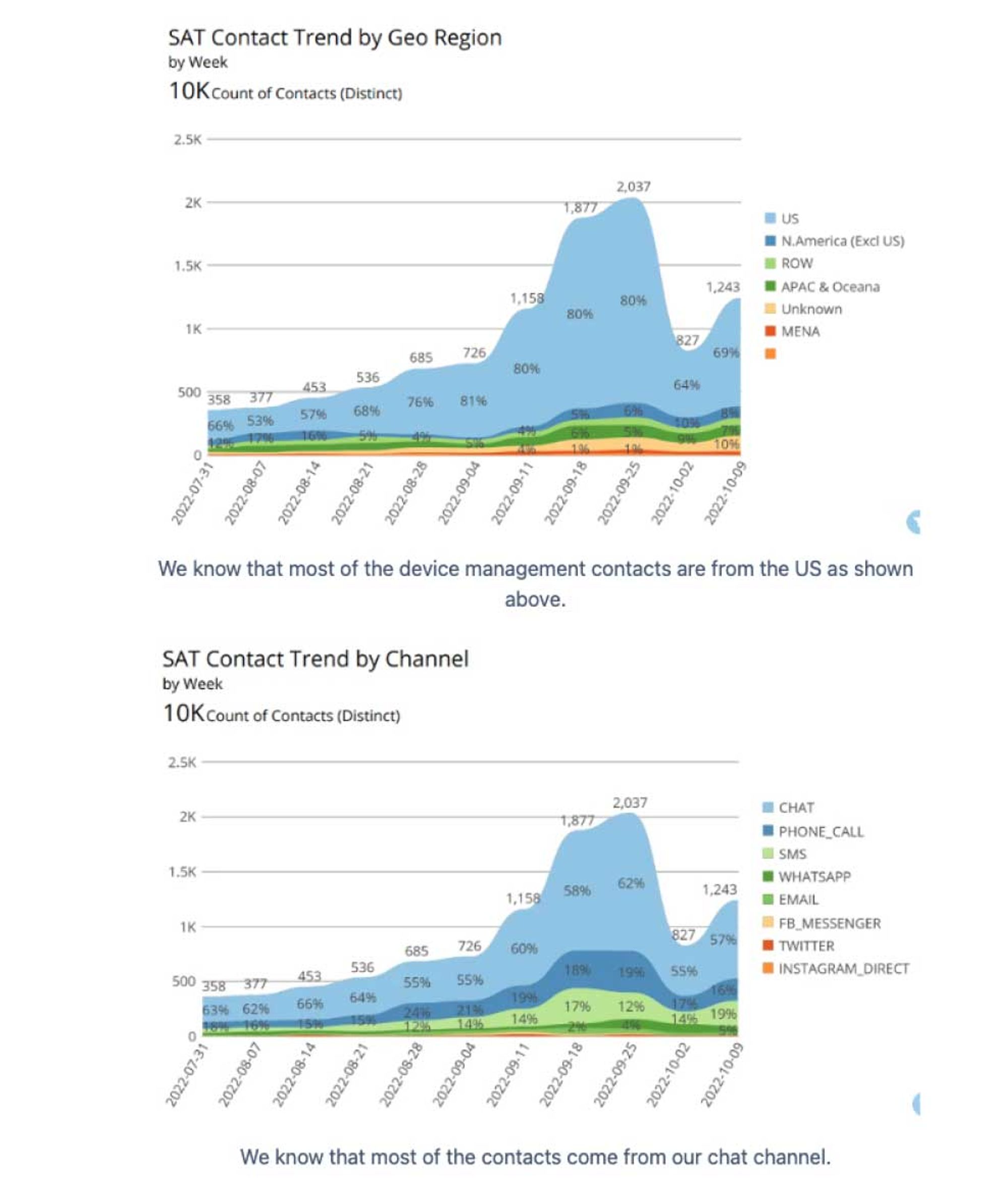

Quantitative Data (deep dive into the quant data to try and validate the anomalies hypothesized)

↓

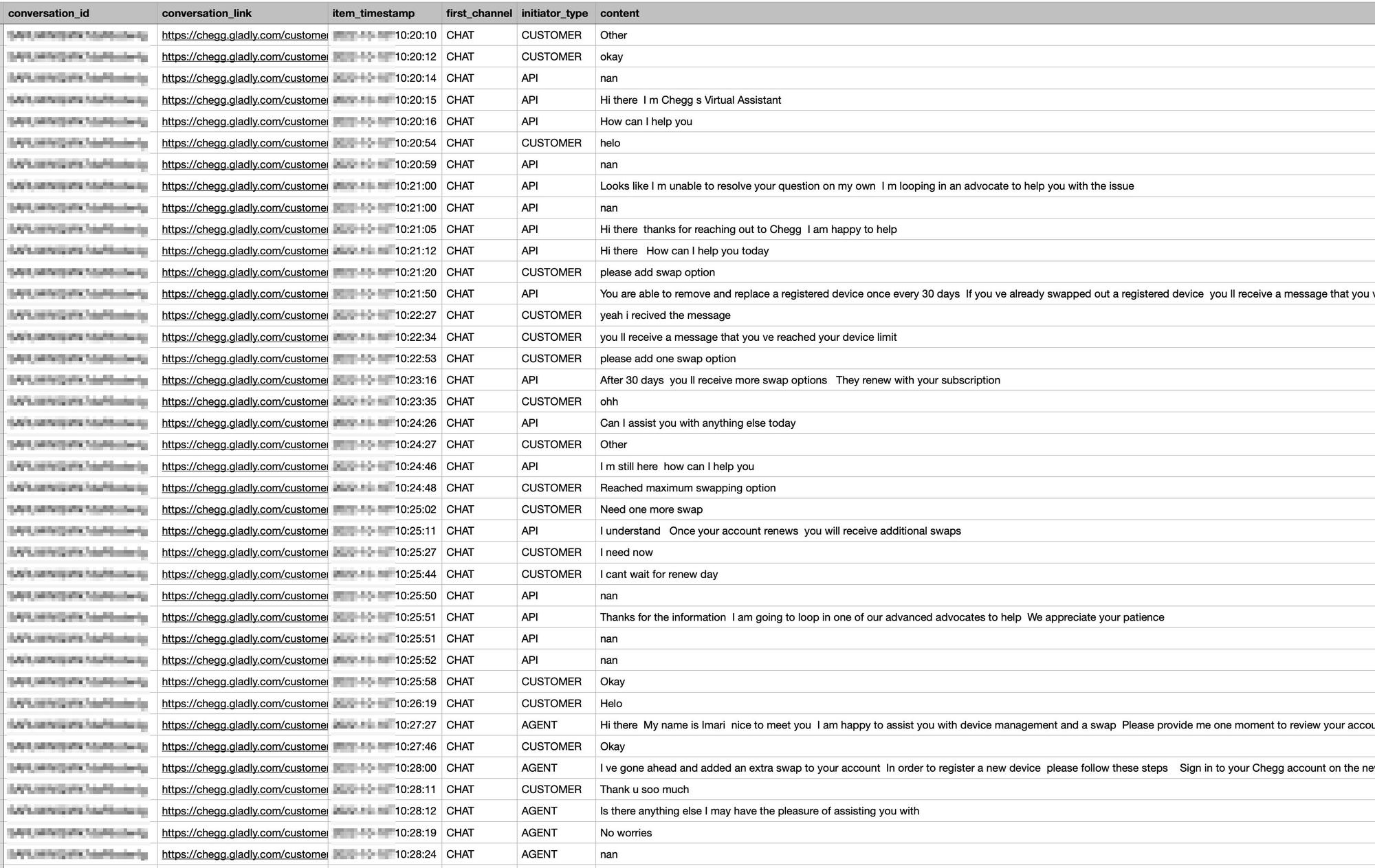

Customer Service Team Insights/User Feedback (collected all the verbal feedback from student advocate interviews and scrubbed through dozens of pages of call/chat logs, interviews, etc.)

↓

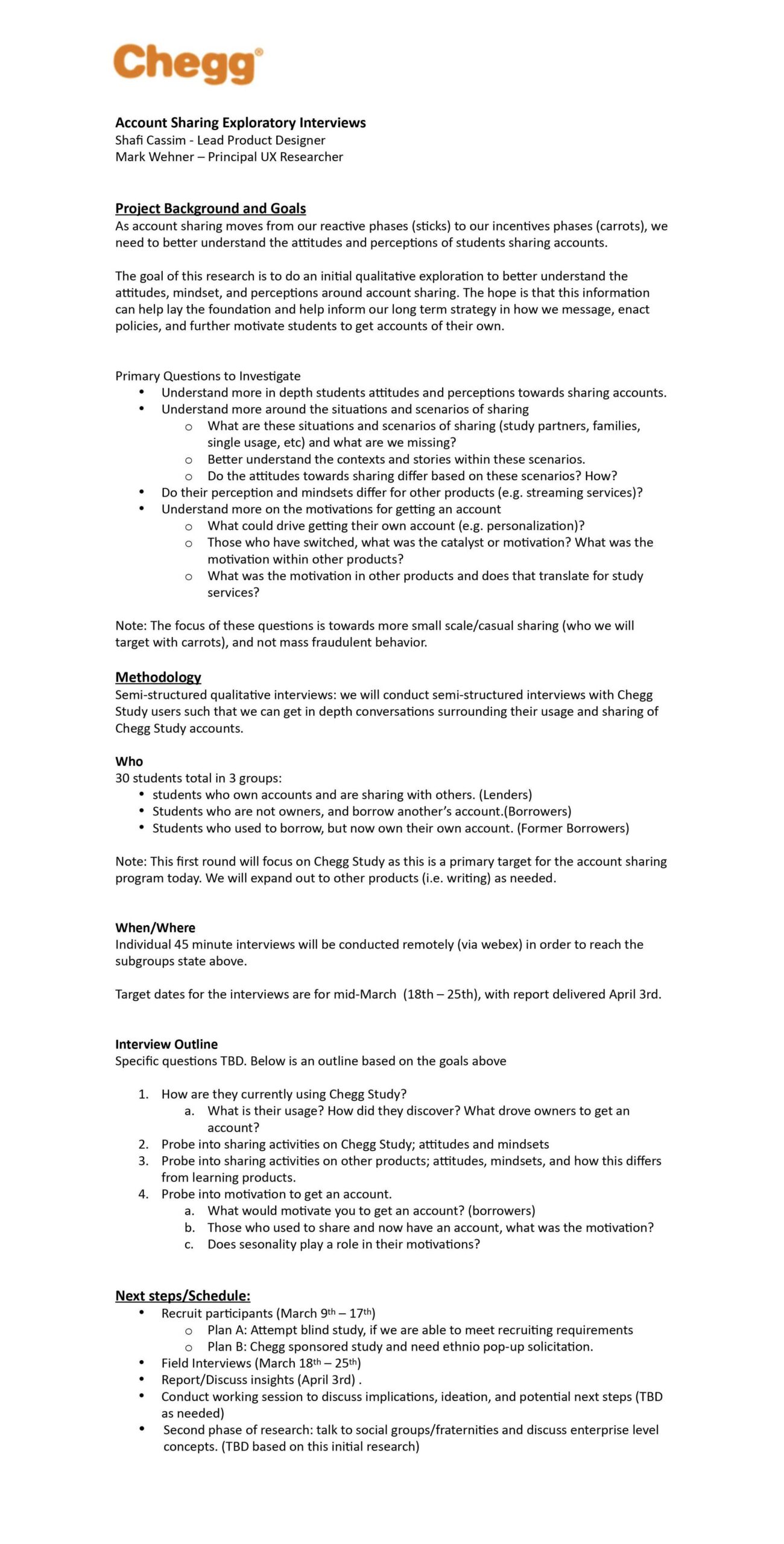

Research Plan & Outcome (wrote the exploratory research plan and worked with Researcher on execution plan — then shared research outcome with team & stakeholders)

↓

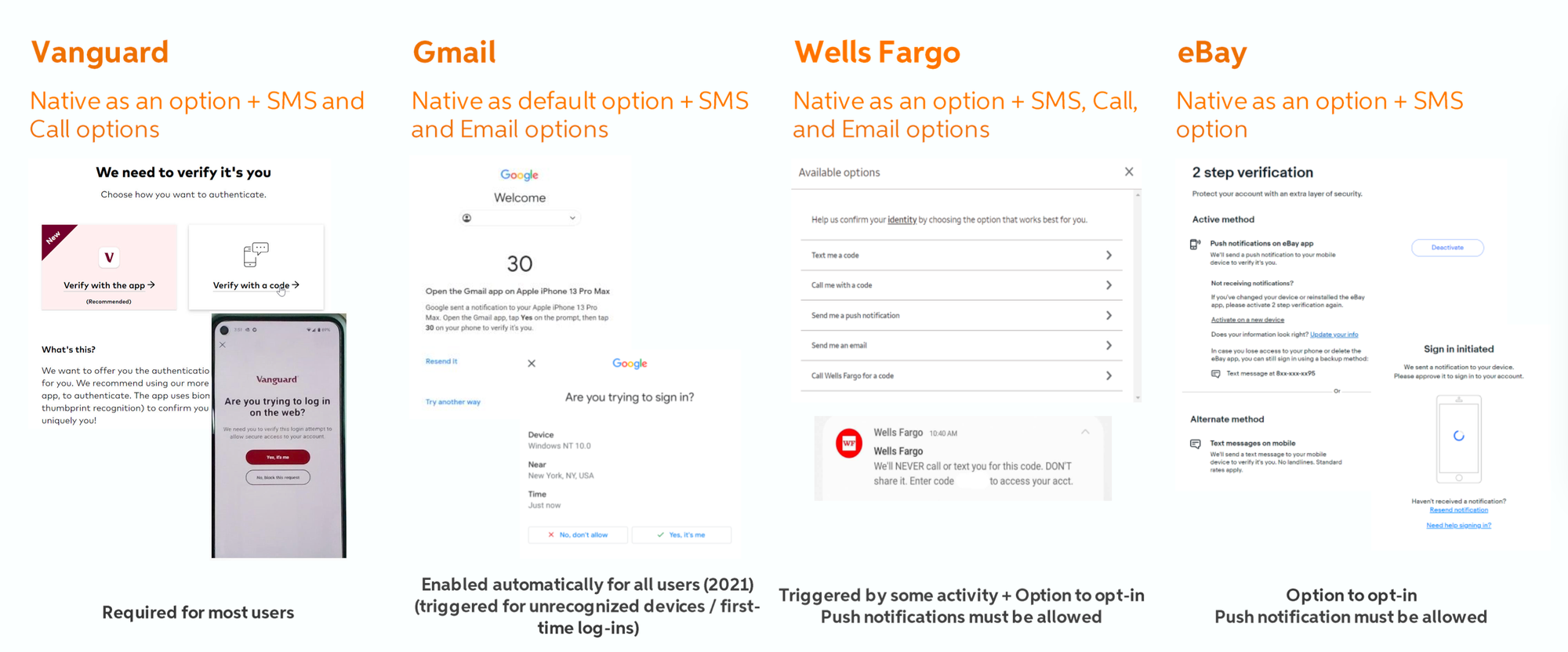

Competitive Analysis (did a robust design/architecture/product competitive analysis of how other products handle account sharing, Device Management and MFA)

↓

IDEATE PHASE

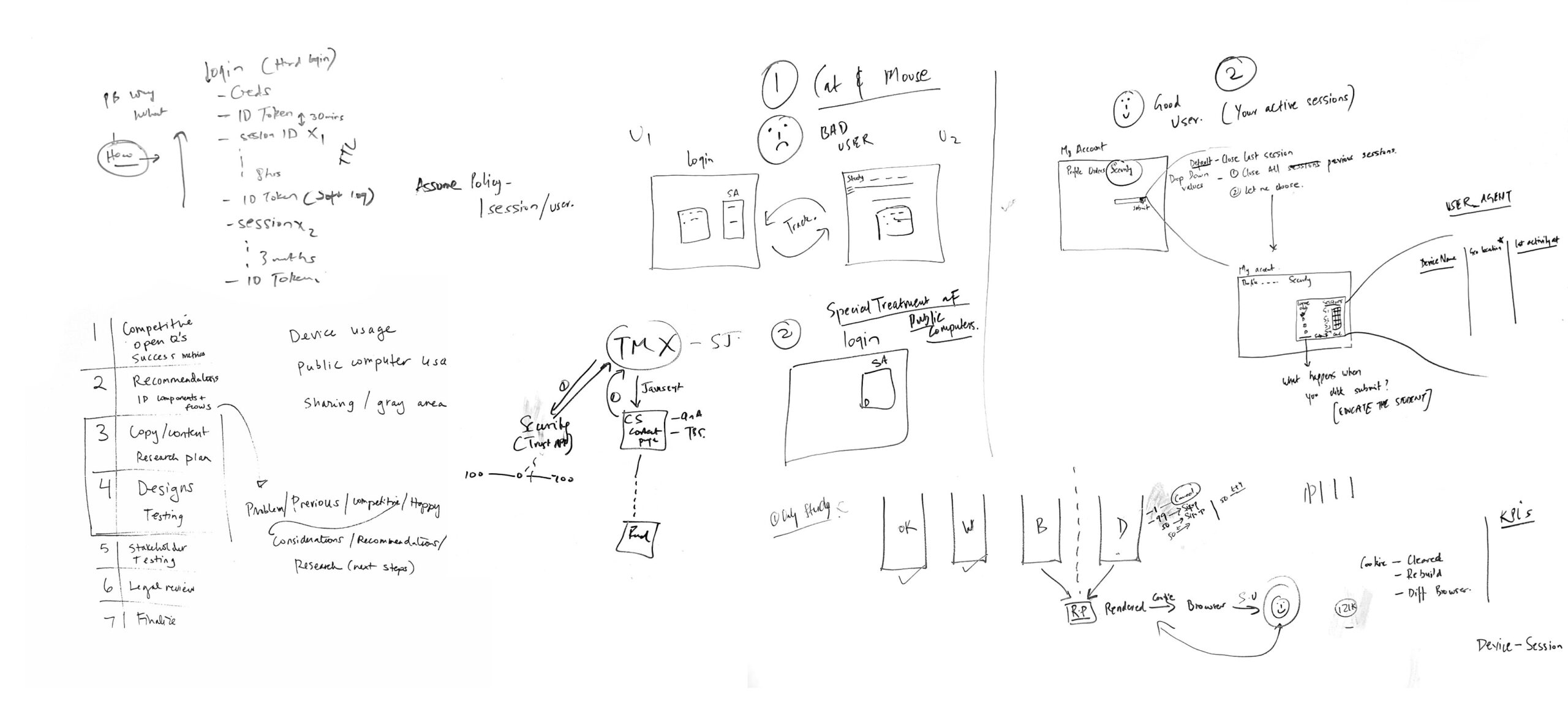

Each phase of Account Sharing had its own ideation phase, which included whiteboarding, user flows, wireframes, competitive analysis, high-fidelity designs, and prototypes.

Whiteboarding (the first thing I did was get in a room with the PM and lead architect on the project; we whiteboarded all the use cases and talked through any constraints or dependencies)

↓

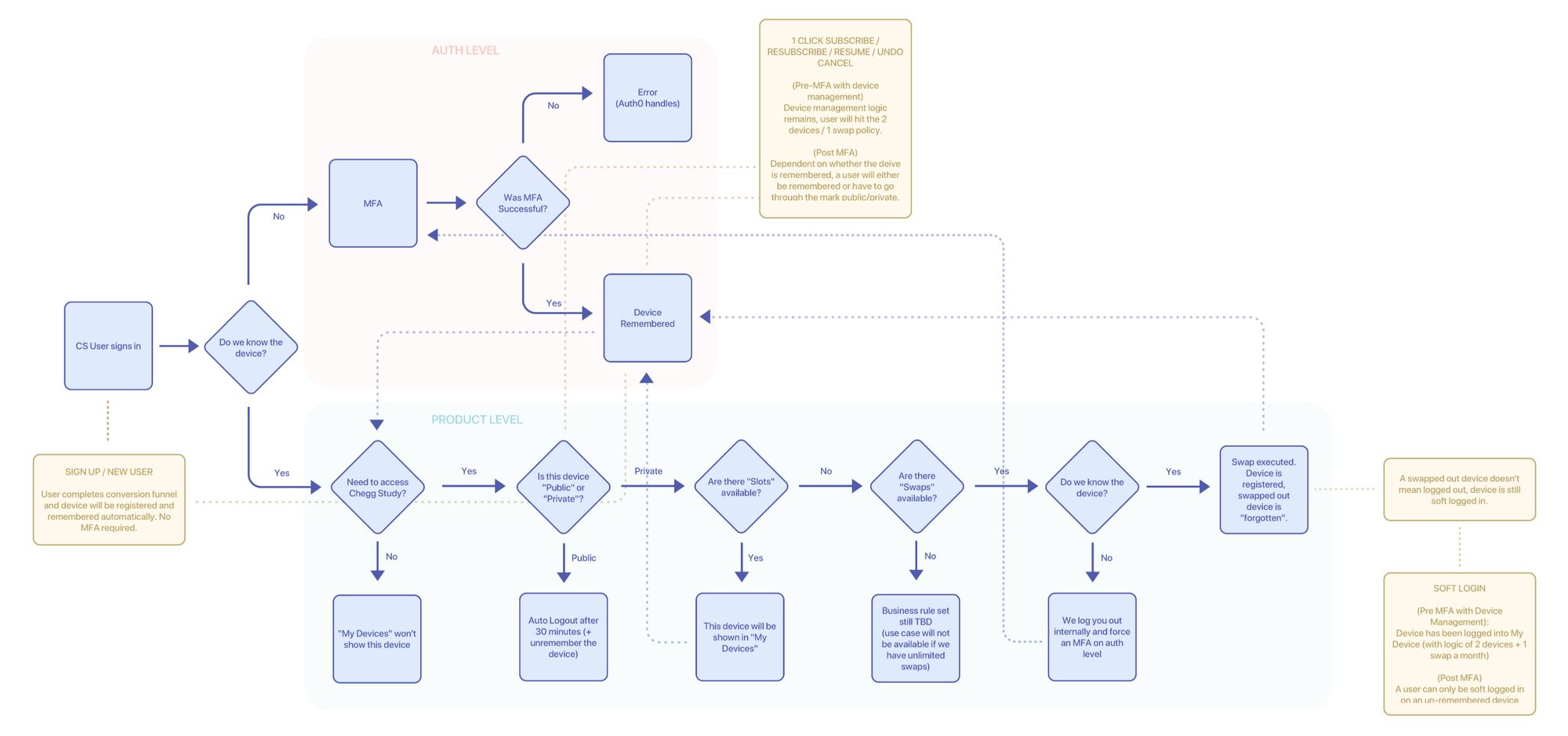

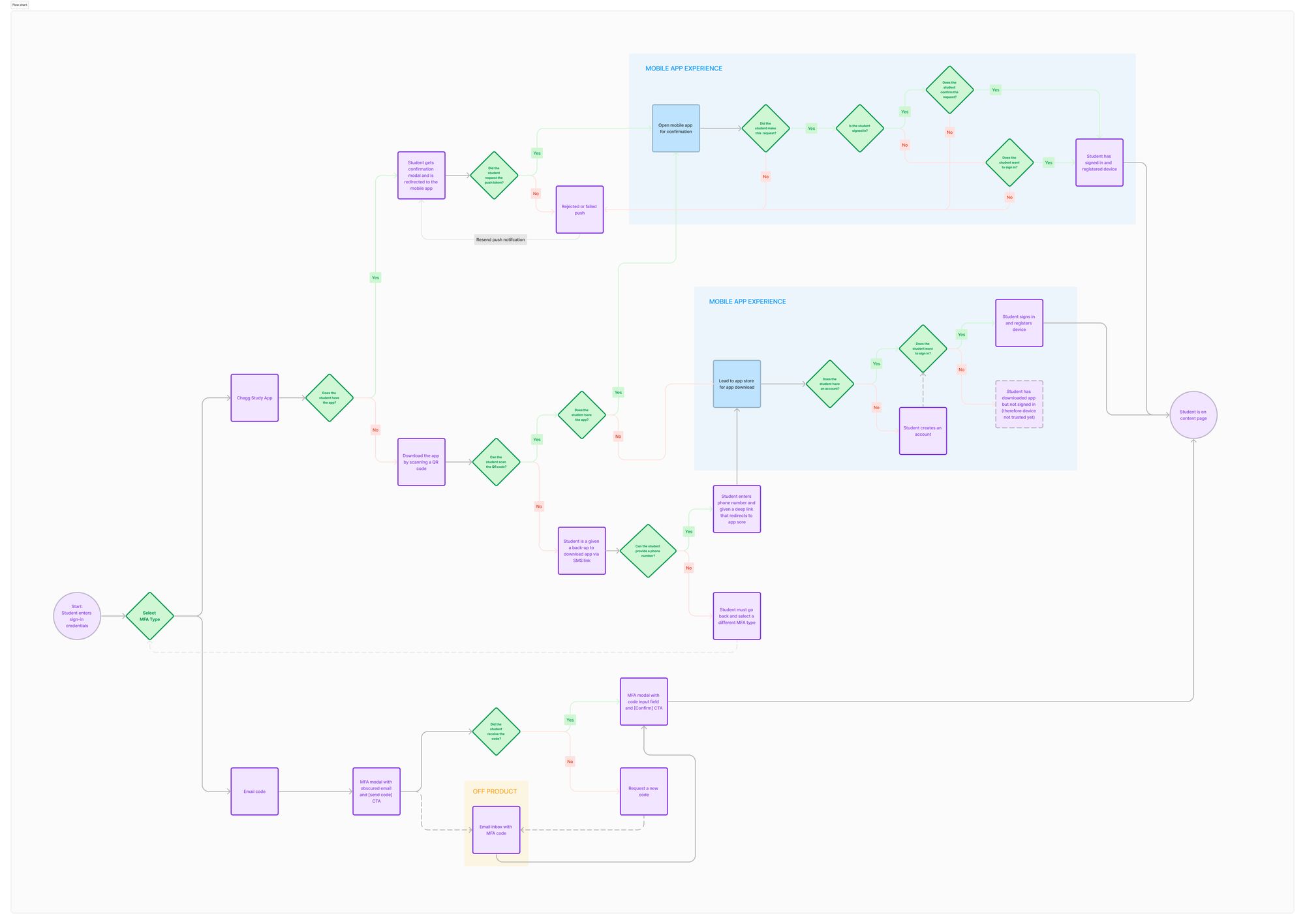

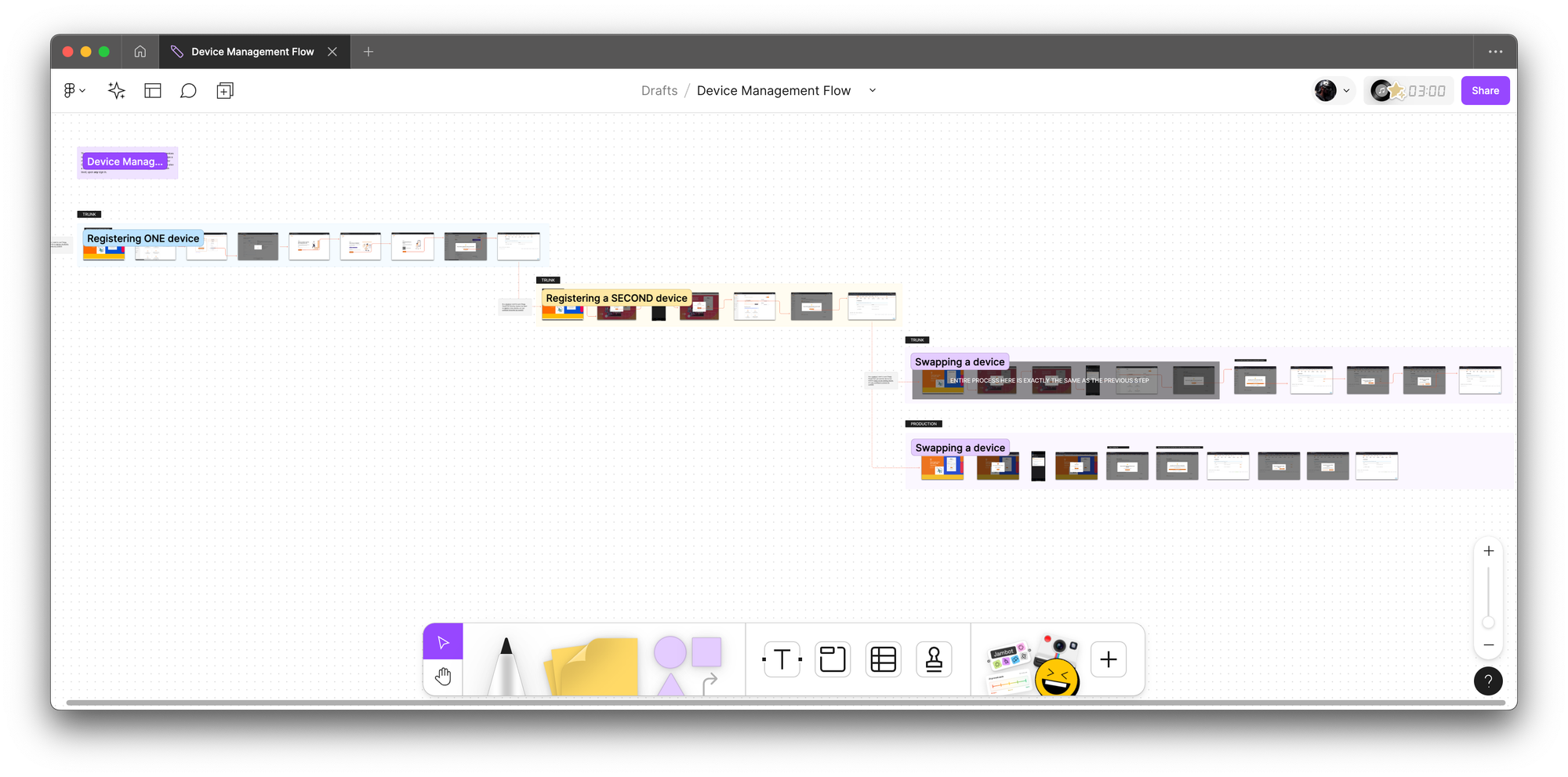

Eng Flows (following the whiteboarding sessions, I created engineering flows to break down and understand the logic further. This part is particularly important for me because platform logic tends to be extremely complicated and nuanced. Below are examples of device management and MFA via app)

↓

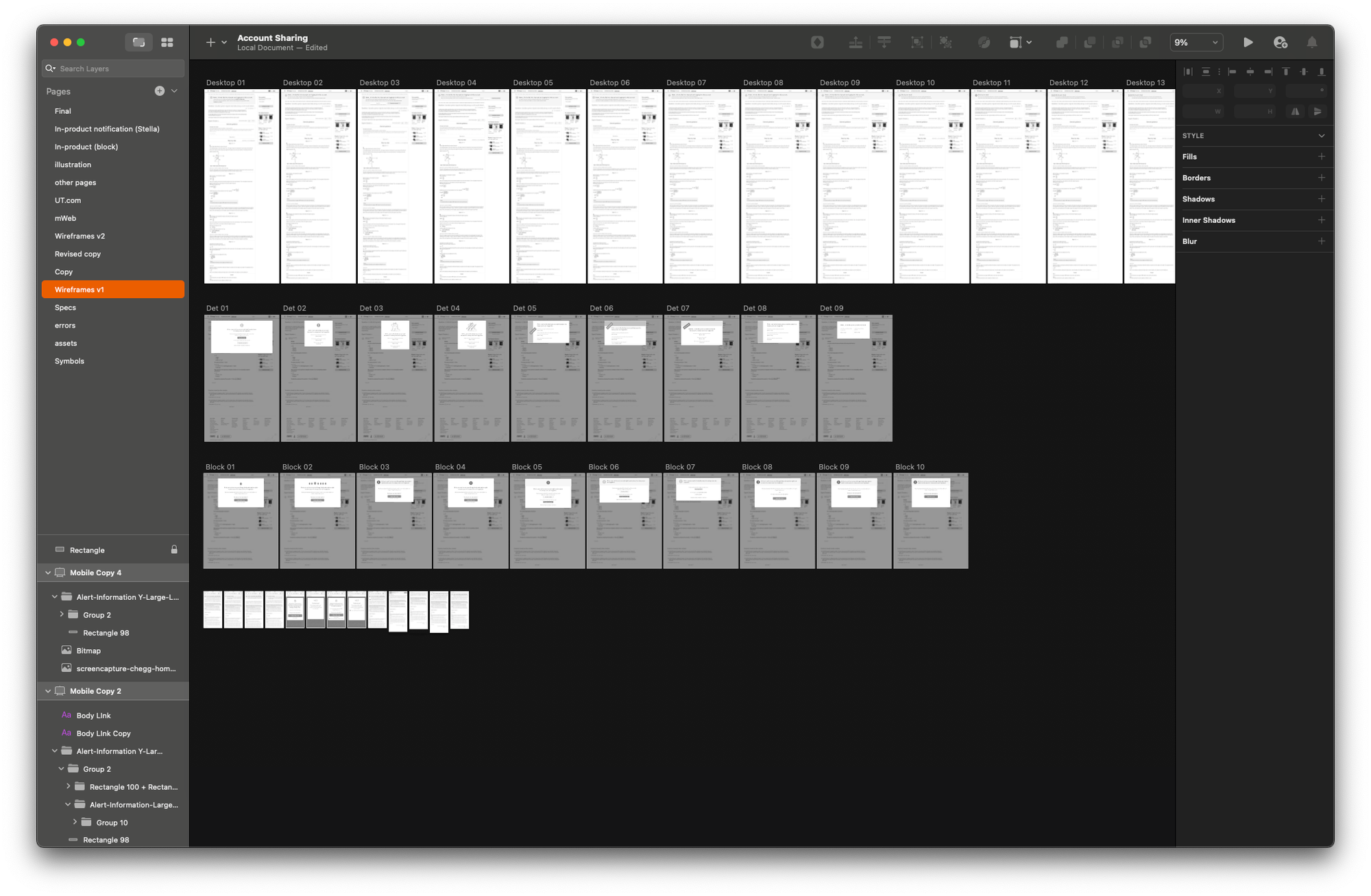

Wireframes (I then went through multiple low fidelity wireframes which I shared with the team often and early to solicit feedback and get alignment)

↓

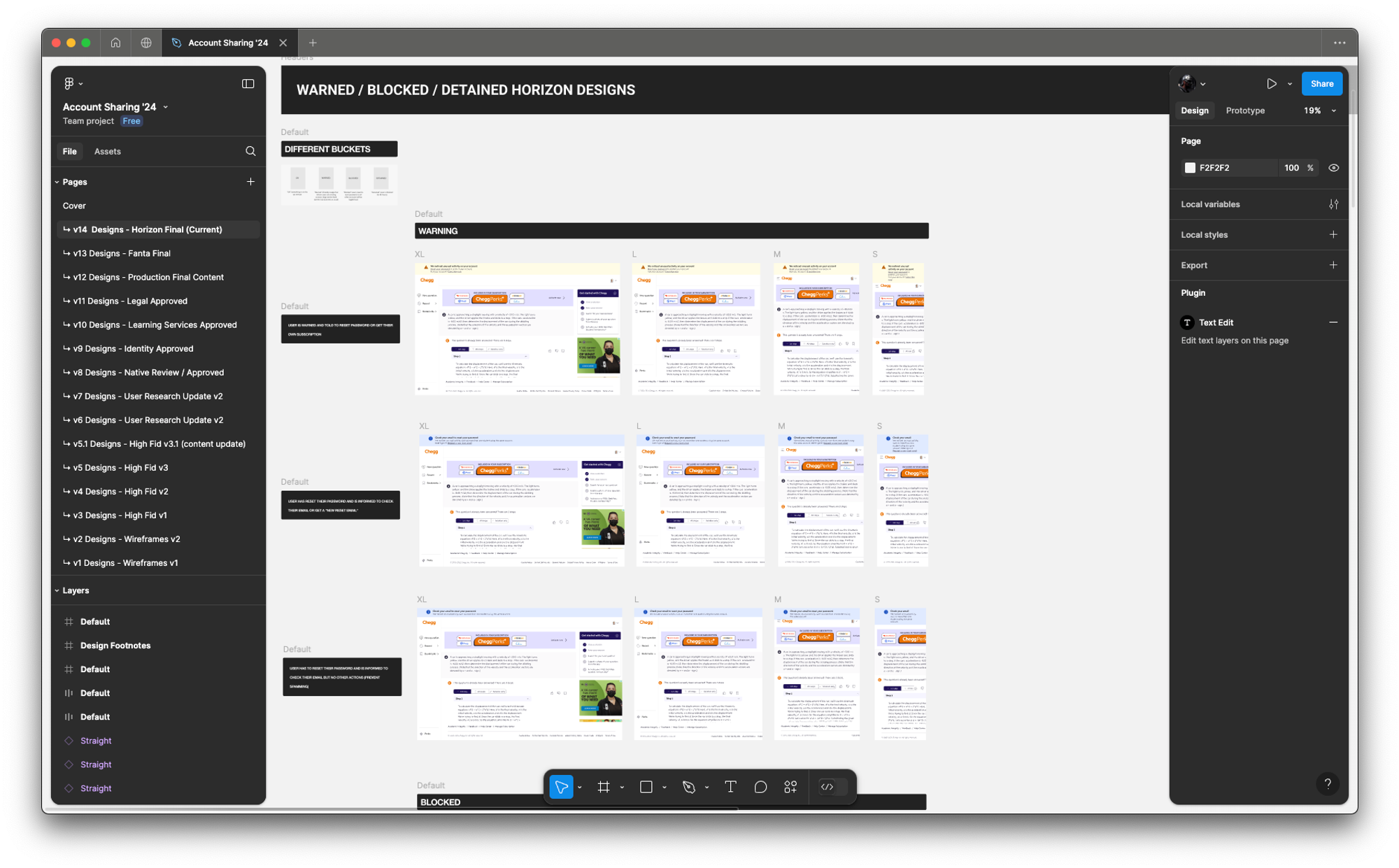

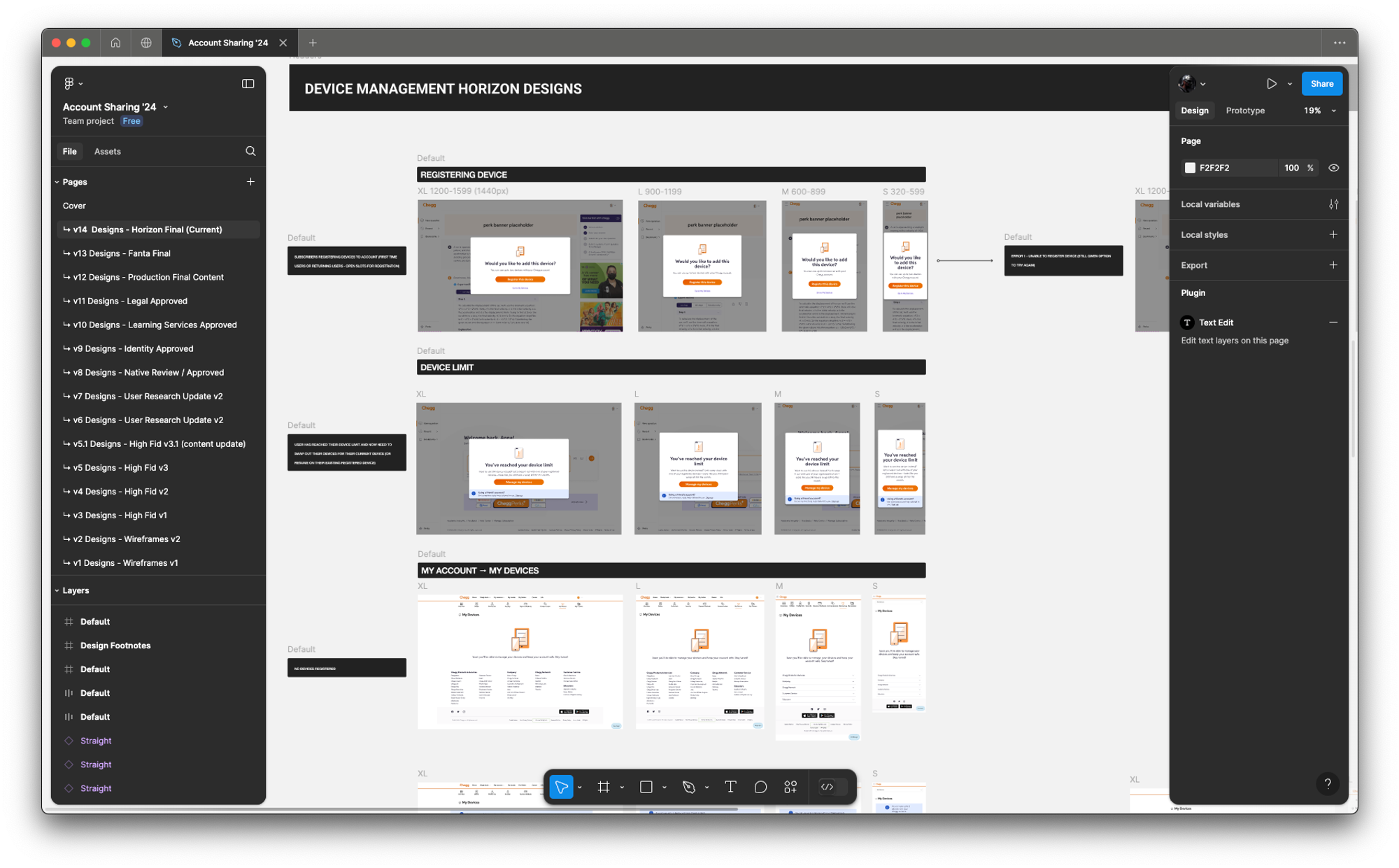

High Fidelity (once we locked the UX in low fidelity mode, I moved on to high fidelity designs where the focus was more on design systems & Chegg-UI components with rapid feedback and iteration sessions)

↓

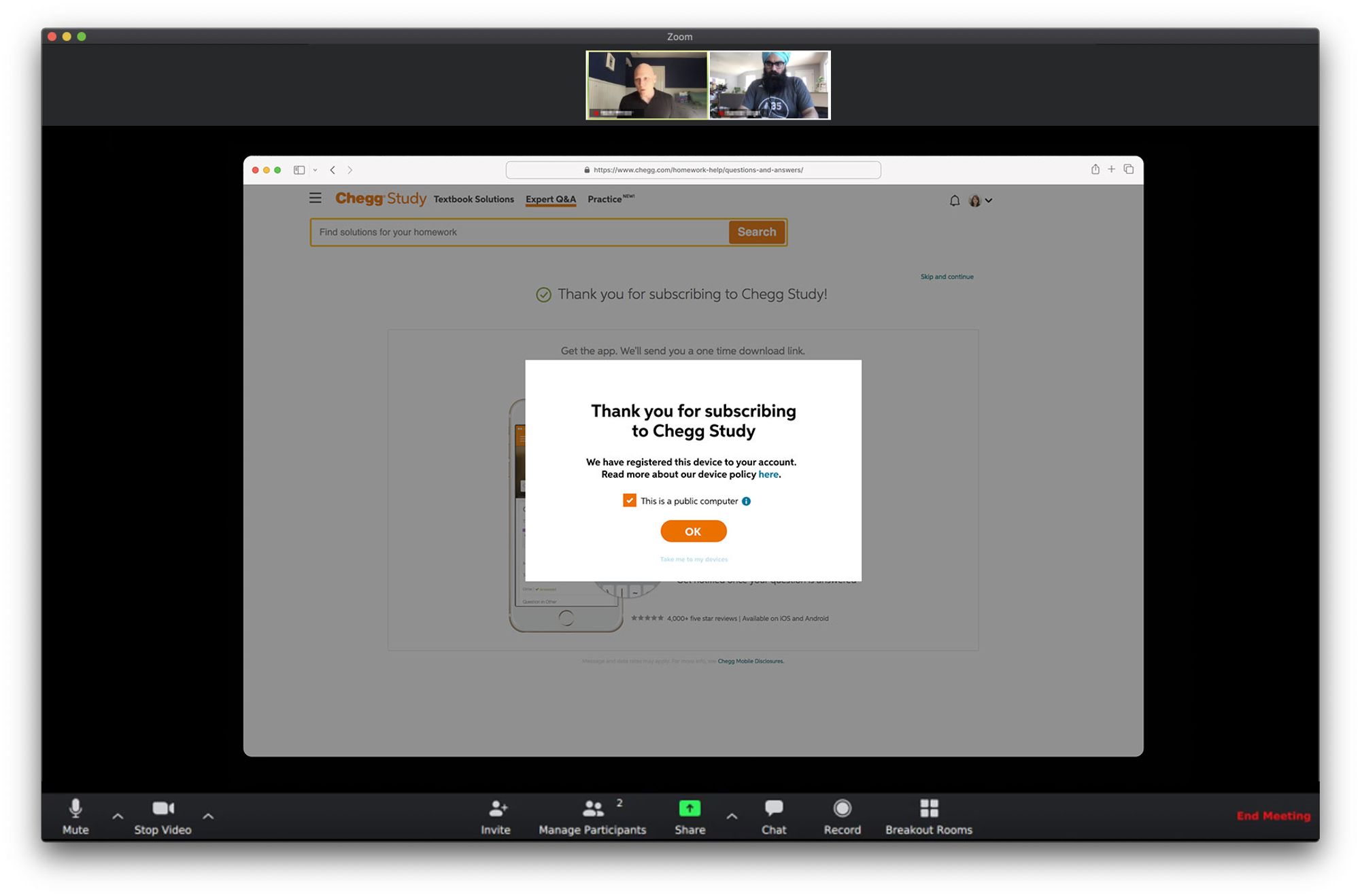

Prototype & User Testing (after locking on the direction and final UI, I put together some prototypes in Framer which we put in front of users, both in moderated testing as well as unmoderated testing to get more signals)

↓

REFINE PHASE

After refining the UX in wireframes and putting all the UIs into high fidelity designs, I then garnered feedback internally (with immediate product team, stakeholders and design critiques) and made final revisions for handoffs. This included a last round of feedback from content design and legal to prep for our UXQA and dogfooding sessions.

Internal Feedback (final stakeholder steering committee shareout for feedback with Figjam)

↓

Final Design Revisions (some example final designs)

↓

RESPONSIBILITIES

- Research (wrote initial exploration research plan and worked with Researcher for qual sessions)

- UX

- UI

- Interaction

WHAT IS IT?

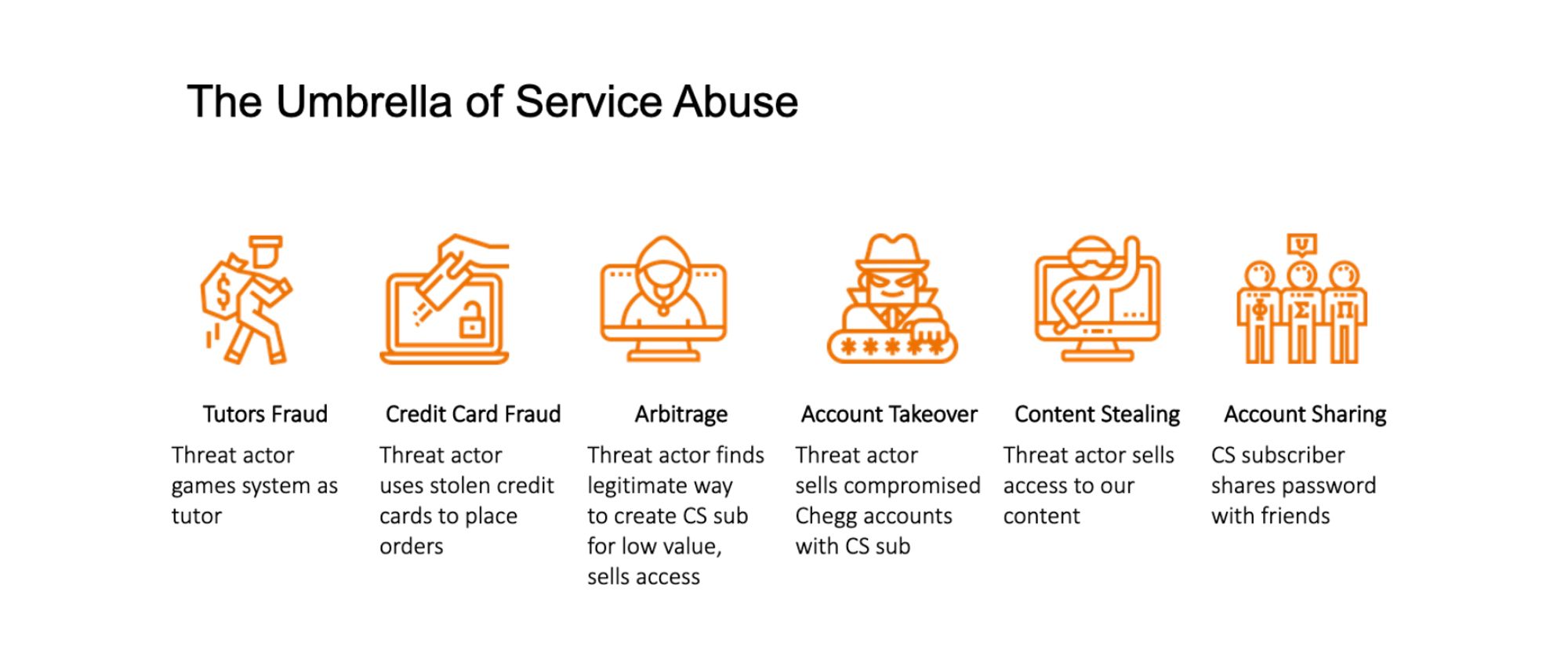

Chegg faced a growing issue of service abuse, where users shared accounts, resold access, or exploited security vulnerabilities, leading to revenue loss and customer dissatisfaction. This not only undermined the platform’s business model but also eroded trust, as legitimate users faced account takeovers, interruptions, and security concerns. The challenge was to implement effective deterrents without creating excessive friction for paying customers.

WHAT IS THE PROBLEM?

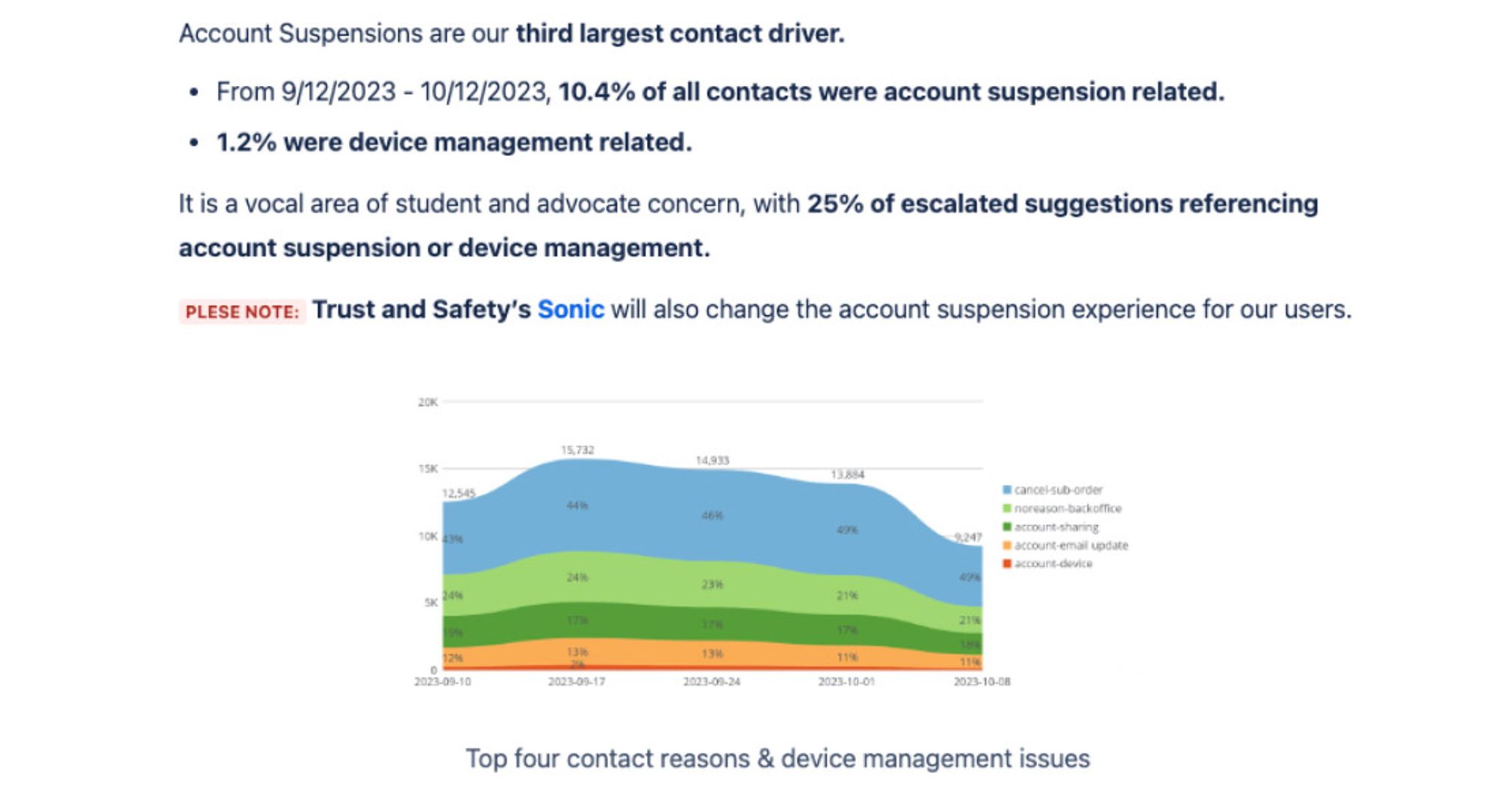

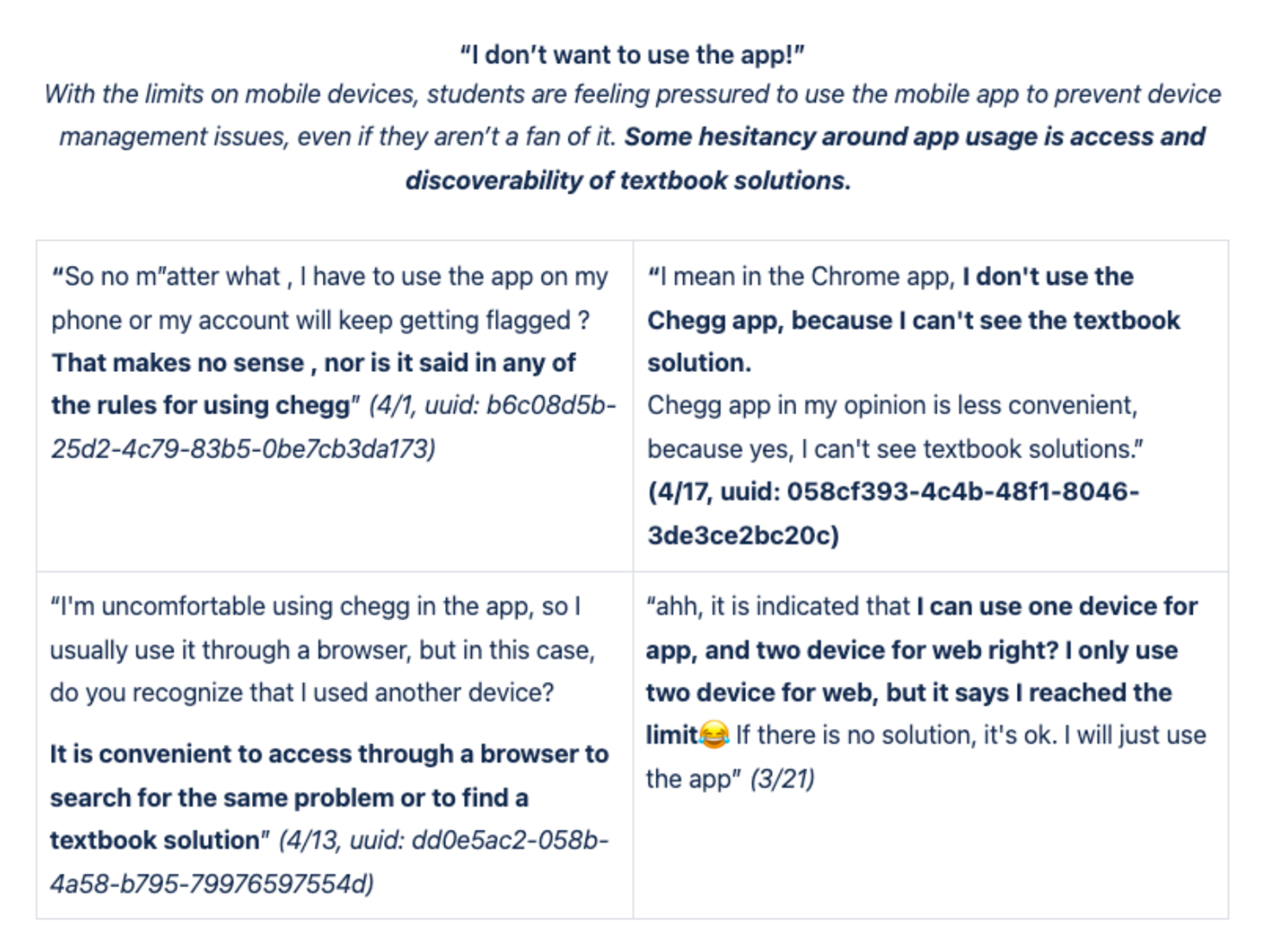

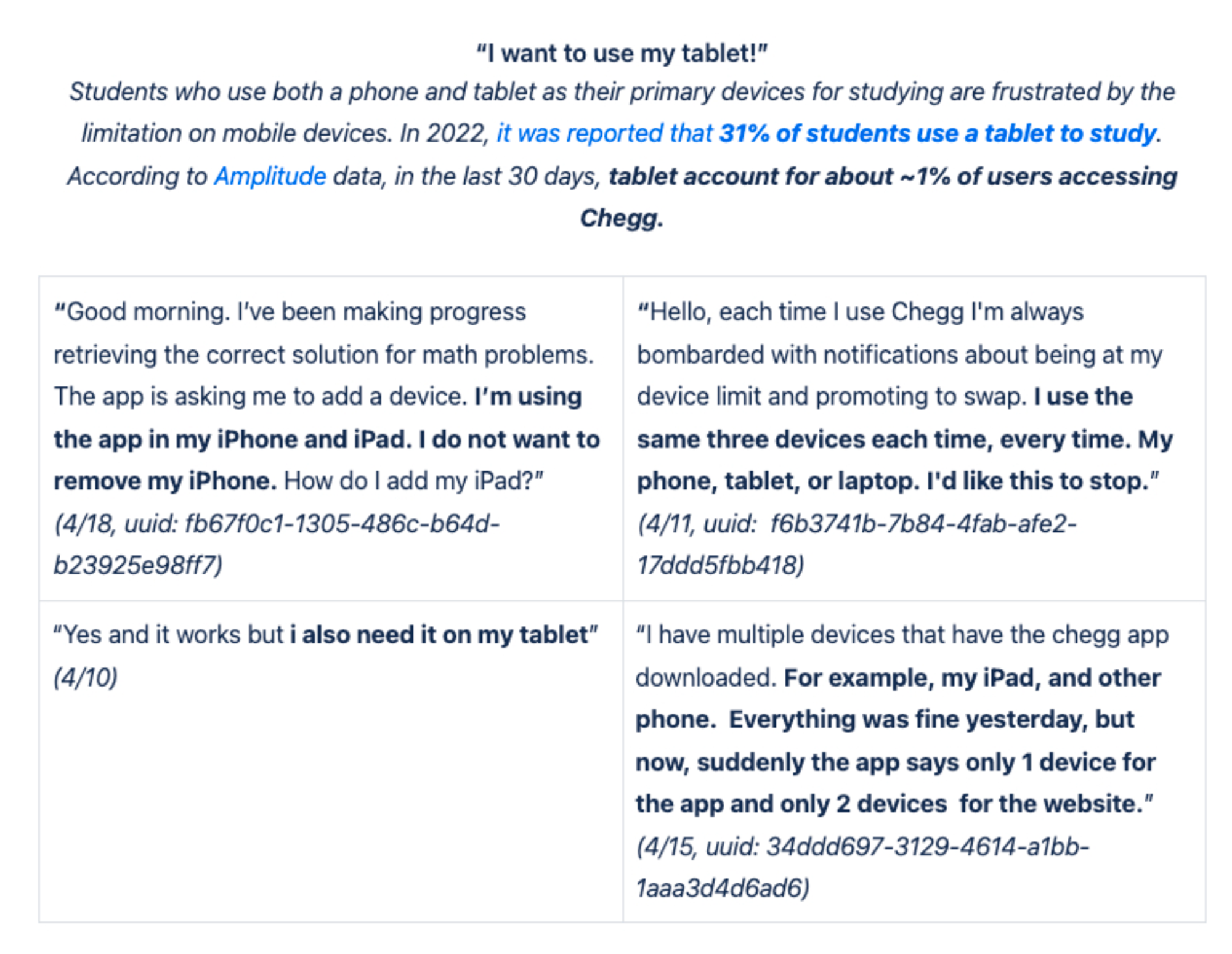

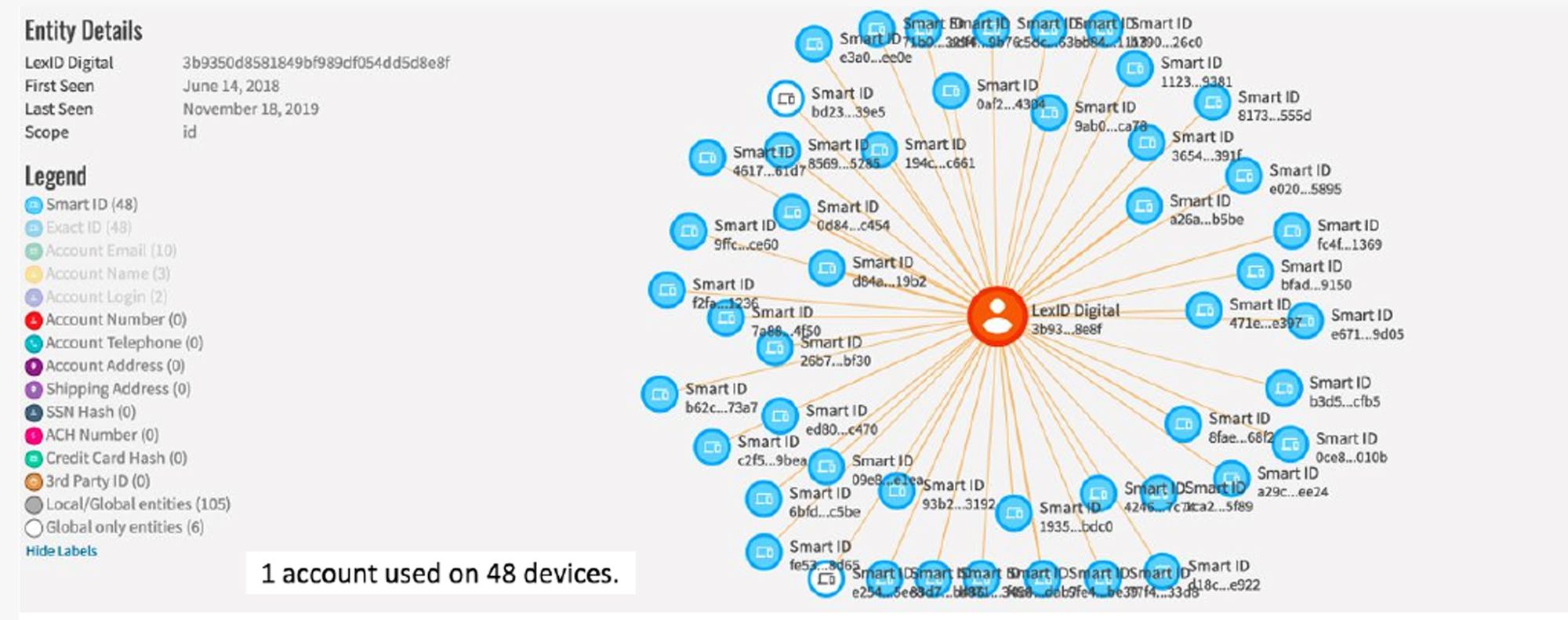

Chegg’s platform was losing revenue and user trust due to unchecked service abuse, primarily through account sharing, credential theft, and content reselling. Over 60% of users engaged in some form of unauthorized access, and a single account was found linked to 48 devices.

This not only devalued subscriptions but also led to a surge in customer complaints, with 17% of support calls related to account takeovers. The challenge was exacerbated by the perception that sharing was harmless, forcing Chegg to find a way to curb abuse without alienating users or adding excessive friction to legitimate access.

WHAT IS THE CHALLENGE?

The challenge was to curb service abuse (rampant account sharing, credential theft, and content reselling) without damaging the user experience or driving away legitimate customers. Many users saw sharing as normal or justified, making strict enforcement risky for retention. Security measures needed to be effective yet seamless, balancing fraud prevention with accessibility. Additionally, any solution had to be scalable, adaptable to evolving abuse tactics, and aligned with Chegg’s business goals of increasing subscriptions and improving customer satisfaction.

WHAT IS THE GOAL?

- Reduce service abuse by curbing unauthorized account sharing, credential theft, and content reselling.

- Protect legitimate users from account takeovers and security vulnerabilities.

- Improve platform security without adding excessive friction to the user experience.

- Increase subscriber growth by converting unauthorized users into paying customers.

- Enhance customer trust and satisfaction through better security and account control.

WHAT IS OUR METRIC FOR SUCCESS?

- Reduction in account takeovers – Decrease the percentage of customer support calls related to stolen or compromised accounts.

- Decrease in unauthorized account sharing – Track a decline in flagged multi-user accounts and excessive device logins.

- Increase in new subscriber sign-ups – Measure conversion rates from flagged sharers to paying users.

- Revenue impact – Drive a measurable lift in subscription revenue.

- Customer satisfaction (CSAT) improvement – Boost CSAT scores related to account security and user trust.

- Adoption of security measures – Measure user engagement with MFA, device management, and other security enhancements.
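As a rough illustration of the "excessive device logins" metric above, the sketch below counts distinct devices per account and flags accounts over a cutoff. The event shape, the function name `flag_excessive_devices`, and the threshold of 5 devices are all assumptions made for this example; the case study does not state how accounts were actually flagged.

```python
from collections import defaultdict

# Hypothetical cutoff: the real threshold is not stated in the case study.
MAX_DEVICES = 5


def flag_excessive_devices(login_events, max_devices=MAX_DEVICES):
    """Return {account_id: distinct_device_count} for accounts over the cutoff.

    login_events is an iterable of (account_id, device_id) pairs (assumed shape).
    """
    devices_by_account = defaultdict(set)
    for account_id, device_id in login_events:
        devices_by_account[account_id].add(device_id)
    return {
        account_id: len(devices)
        for account_id, devices in devices_by_account.items()
        if len(devices) > max_devices
    }


# Made-up sample data: one account seen on 48 devices, one on 2 devices.
events = [("acct-1", f"dev-{i}") for i in range(48)]
events += [("acct-2", "dev-a"), ("acct-2", "dev-a"), ("acct-2", "dev-b")]
flagged = flag_excessive_devices(events)
```

Tracking the size of `flagged` over time would give one simple way to watch for a decline in flagged accounts.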

WHAT IS THE PROCESS?

The project was fast-moving and became high priority as soon as the red flags were identified and the quant data was synthesized. Leadership decided to make it the top priority for the Identity & Access Management, Trust & Safety, and Security teams. Service Abuse was then broken into 4 phases with separate execution timelines:

- Phase 1 – Detention

- Phase 2 – Device Management

- Phase 3 – MFA via Email

- Phase 4 – MFA via App

The design process for each section was broken down into variants following the design thinking phases:

- Discovery Phase (Understanding and gathering phase of Quant Data, Customer Service Interviews, Qualitative research and Competitive Analysis)

- Ideation Phase (Low fidelity & high fidelity variants based on product team feedback, leadership visibility meetings and UX/design system feedback reviews, and real user early signal testing feedback)

- Refine Phase (Revisions based on user feedback, stakeholder feedback and A/B testing data feedback)

- Rollout Phase (Once all 4 phases released, post rollout data, deployment strategy, instrumentation tracking)

↳ DISCOVERY (empathize and define phase)

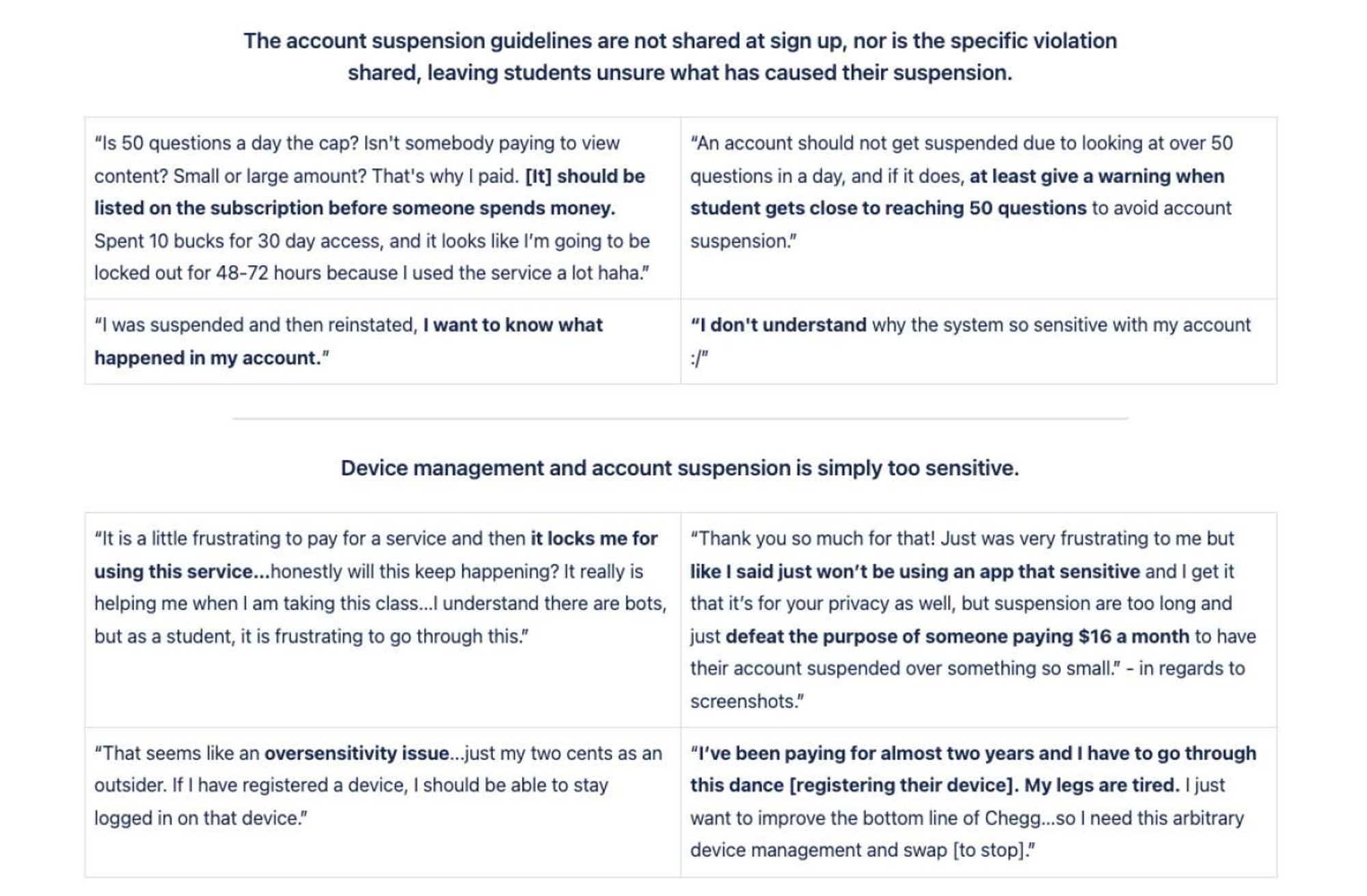

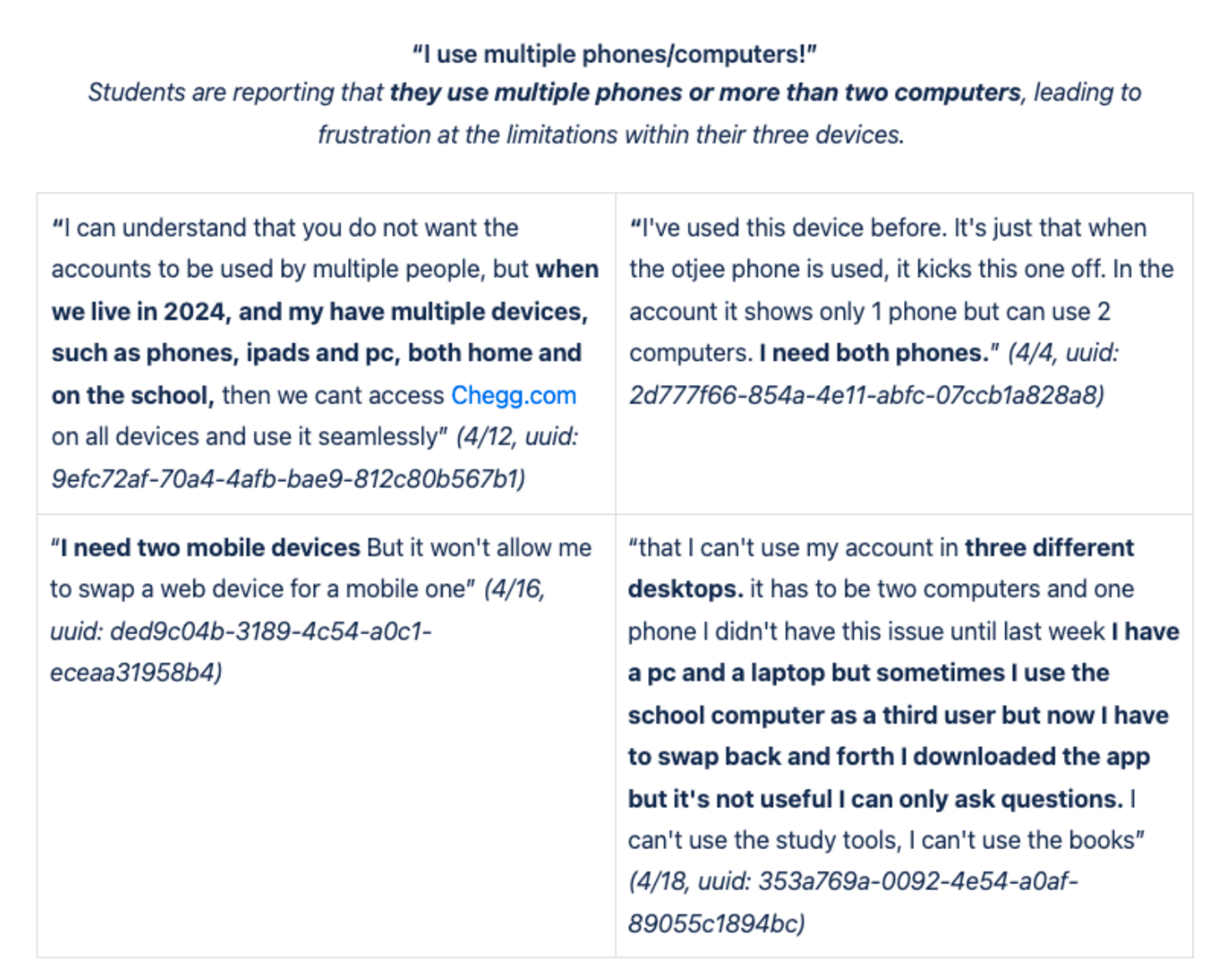

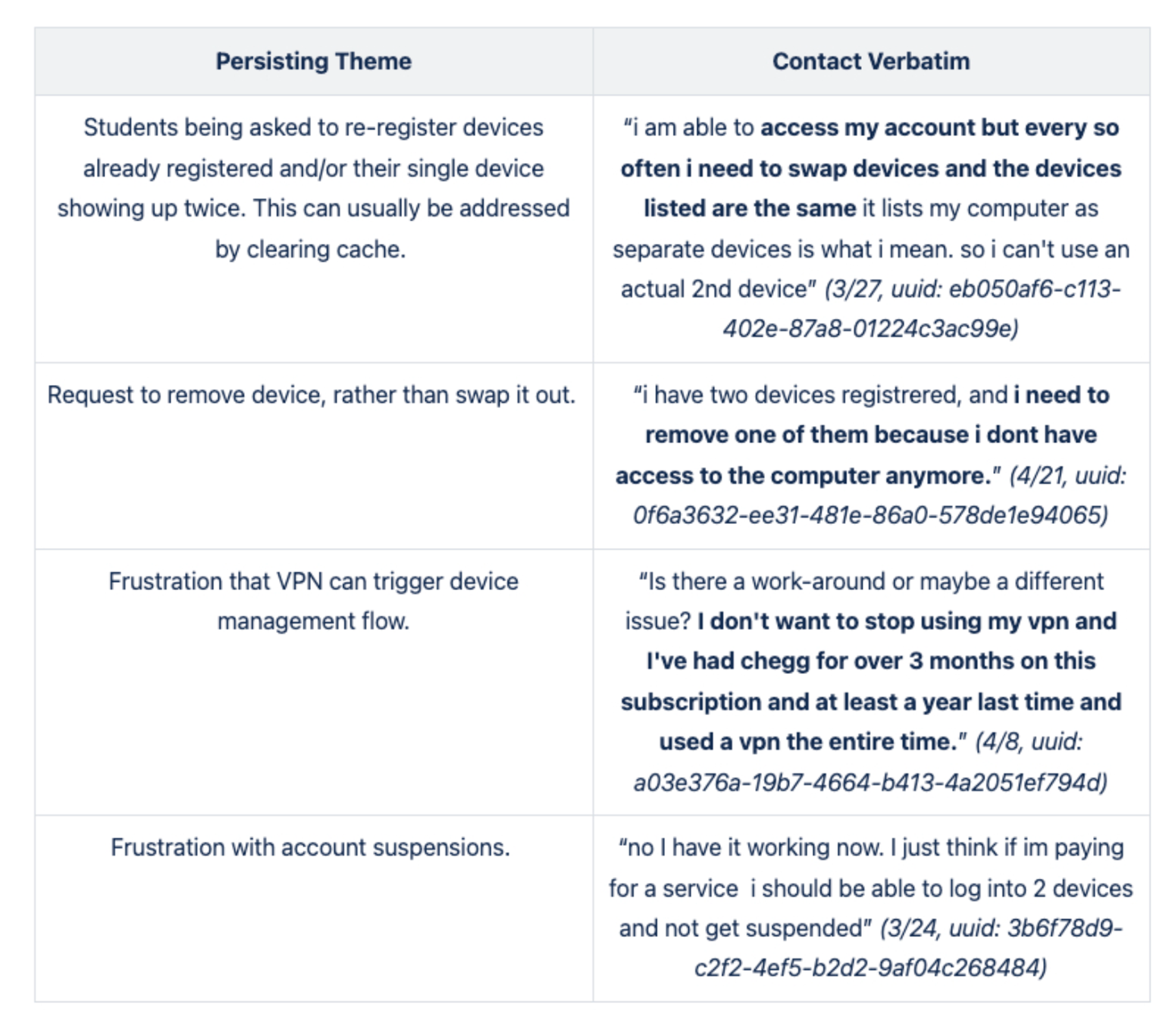

The process began with a deep dive into quantitative and qualitative research to uncover the extent and impact of service abuse. I analyzed platform analytics, customer support logs, and security data to quantify unauthorized access and identify common abuse patterns. Interviews with affected users, including both victims of account takeovers and those engaged in sharing, provided insights into motivations and pain points. This research revealed key challenges: widespread sharing behavior, trust erosion due to security vulnerabilities, and a perception that sharing was harmless. These findings shaped the problem definition and set the foundation for potential solutions.

↳ IDEATION (ideate, prototype & test phase)

With a clear understanding of the problem, I explored intervention strategies that balanced security with usability. Multiple solutions were considered, including user education, deterrents, and stricter authentication methods. The team mapped out a phased approach, starting with low-friction deterrents like warnings and detentions before escalating to stronger measures like device registration and multi-factor authentication (MFA). Design explorations focused on minimizing disruption to legitimate users while discouraging abuse. Early concept validation through internal critiques and stakeholder alignment helped refine which interventions had the highest impact with the least risk.

↳ REFINE (revision and finalize phase)

Once key solutions were identified, I built and tested interactive prototypes to validate usability and effectiveness. Low-fidelity wireframes allowed for rapid feedback, while high-fidelity designs were tested with real users to gauge reactions. Early signal testing helped assess the clarity of warnings, the friction caused by security measures, and potential drop-off risks. Continuous iteration addressed usability concerns, such as simplifying device registration flows and ensuring MFA prompts were intuitive. Cross-functional collaboration with engineering, security, and customer support ensured the feasibility of implementation while maintaining a user-friendly experience.

↳ ROLLOUT (deployment and post-rollout data)

The final rollout followed a multi-phase deployment strategy to mitigate risk and measure effectiveness at each step. Phase 1 introduced warnings and detentions to nudge sharers toward compliance, followed by Phase 2’s device registration to limit unauthorized logins. Phases 3 and 4 added MFA layers via email and app authentication to enhance account security. Post-launch, the team closely monitored impact metrics, including subscription growth, security incidents, and customer satisfaction improvements. Regular feedback loops with customer support and real-time analytics helped refine the approach, ensuring that security measures remained effective without alienating legitimate users.
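One way to picture the layered rollout is as an escalation ladder, where an account moves up one step only while it keeps getting flagged. This is a minimal sketch under stated assumptions: the `Enforcement` enum, the `next_step` function, the one-step-at-a-time rule, and the reset to no enforcement are hypothetical and are not the platform's actual logic.

```python
from enum import IntEnum


class Enforcement(IntEnum):
    """Enforcement steps, ordered by the rollout phases described above."""
    NONE = 0
    WARNING = 1              # Phase 1
    DETENTION = 2            # Phase 1
    DEVICE_REGISTRATION = 3  # Phase 2
    MFA_EMAIL = 4            # Phase 3
    MFA_APP = 5              # Phase 4


def next_step(current, still_flagged):
    """Escalate one step while the account stays flagged (assumed rule).

    An account that is no longer flagged drops back to NONE, which is
    also an assumption made for this sketch.
    """
    if not still_flagged:
        return Enforcement.NONE
    return Enforcement(min(current + 1, Enforcement.MFA_APP))


# Walk a hypothetical account that stays flagged through every step.
step = Enforcement.NONE
path = []
for _ in range(6):
    step = next_step(step, still_flagged=True)
    path.append(step.name)
```

The point of the ladder shape is that legitimate users who are never flagged stay at `NONE` and see no added friction.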

CONCLUSION

The multi-phased approach to addressing service abuse proved effective in reducing unauthorized access while maintaining user trust. By gradually introducing warnings/detention, device registration, and MFA, Chegg was able to curb fraudulent activity without disrupting legitimate users. Layering security measures allowed the platform to apply increasing levels of enforcement only where necessary, ensuring that legitimate users weren’t overwhelmed with excessive friction from the start. This progressive approach not only improved compliance but also helped users adapt to changes gradually. Ultimately, the initiative strengthened account security, improved customer satisfaction, and drove revenue growth through increased legitimate subscriptions.

FINAL METRICS

- 98% – Reduced account takeovers by 98%.

- 17% → 1% – Calls related to compromised accounts dropped from 17% to 1% of all customer support calls.

- 413K+ new subscriptions – 413K+ new subscriptions after all phases were implemented (totaling a $39M revenue increase).

↳ Learnings and Follow-ups

What went well:

- User research + analytics → Identified how and why users abused accounts, ensuring solutions targeted real behaviors, not just business assumptions.

- Data-driven alignment → Reduced subjectivity and helped teams prioritize the right problems faster.

- Multi-phase security rollout → Gradual introduction of security layers minimized user frustration and prevented churn while still curbing abuse.

What I’d Improve:

- Better user education → Many users saw security measures as barriers rather than protections; stronger in-product education and improved email campaigns could clarify the benefits.

- Rethinking enforcement → Some sharers unintentionally abused the system but were punished at stressful moments; alternative solutions like group discounts or multi-user plans could encourage voluntary compliance.

- Exploring behavioral nudges → More time collaborating with product teams could have led to less punitive, trust-building solutions that drive long-term retention instead of forced compliance.

To this day, I still log and collect quant data on account sharing through our analytics dashboard and attend a regular sync with the customer service team (student advocate team), aptly named “Customer Voice”, to collect and discuss user feedback. I keep an active Jira backlog of priorities ranging from P1 to P4 to track fast-follows and must-have inclusions for the next phases.
